Facebook is dying

While I only deleted my Twitter account at the end of last year, it’s been about 12 years since I deleted my Facebook one. As Cory Doctorow points out, it’s a terrible organisation that no-one should work for, and whose products no-one should use.

Even before its stock fell off a cliff, Facebook was mired in a multi-year hiring crisis. Nobody wanted to work for Facebook because it’s a terrible company that makes terrible products that everyone hates and only use because the company has rigged the system to punish users for switching.

Source: I’ve been waiting 15 years for Facebook to die. I’m more hopeful than ever | The Guardian

Facebook was already paying a wage premium, offering sweeteners to in-demand workers in exchange for checking their consciences at the door. Those sweeteners mostly took the form of shares, which means that all those morally flexible “Metamates” got a hefty pay-cut when the company’s stock price fell off a cliff. Expect a lot of them to leave – and expect the company to have to pay even more to replace them. Companies with falling share prices can’t use share grants to attract workers.

Facebook is now famously trying to pivot (ugh) to virtual reality to save itself. It’s an expensive gambit. It’s going to alienate a lot of its users. It’s going to alienate a lot of its in-demand workers. It’s going to freak out a lot of regulators.

Meanwhile, the switching costs for people who want to jump ship keep getting lower. It’s not merely that fewer and fewer of the people you want to talk with are still on Facebook. Even if there’s someone whose virtual company you can’t bear to part with, lawmakers in the US and Europe are working on legislation that would force Facebook to allow third parties to “federate” new services with it. That would mean that you could quit Facebook and join an upstart rival – say, one by a privacy-respecting nonprofit or even a user-owned co-op – and still exchange messages with the communities, customers and family you left behind on Facebook’s sinking ship.

Big Tech companies may change their names but they will not voluntarily change their economics

I based a good deal of Truth, Lies, and Digital Fluency, a talk I gave in NYC in December 2019, on the work of Shoshana Zuboff. Writing in The New York Times, she starts to get a bit more practical as to what we do about surveillance capitalism.

As Zuboff points out, Big Tech didn’t set out to cause the harms it has any more than fossil fuel companies set out to destroy the earth. The problem is that they are following economic incentives. They’ve found a metaphorical goldmine in hoovering up and selling personal data to advertisers.

Legislating for that core issue looks like it could be more fruitful in terms of long-term consequences. Other calls like “breaking up Big Tech” are the equivalent of rearranging the deckchairs on the Titanic.

Democratic societies riven by economic inequality, climate crisis, social exclusion, racism, public health emergency, and weakened institutions have a long climb toward healing. We can’t fix all our problems at once, but we won’t fix any of them, ever, unless we reclaim the sanctity of information integrity and trustworthy communications. The abdication of our information and communication spaces to surveillance capitalism has become the meta-crisis of every republic, because it obstructs solutions to all other crises.

Source: You Are the Object of Facebook’s Secret Extraction Operation | The New York Times

[…]

We can’t rid ourselves of later-stage social harms unless we outlaw their foundational economic causes. This means we move beyond the current focus on downstream issues such as content moderation and policing illegal content. Such “remedies” only treat the symptoms without challenging the illegitimacy of the human data extraction that funds private control over society’s information spaces. Similarly, structural solutions like “breaking up” the tech giants may be valuable in some cases, but they will not affect the underlying economic operations of surveillance capitalism.

Instead, discussions about regulating big tech should focus on the bedrock of surveillance economics: the secret extraction of human data from realms of life once called “private.” Remedies that focus on regulating extraction are content neutral. They do not threaten freedom of expression. Instead, they liberate social discourse and information flows from the “artificial selection” of profit-maximizing commercial operations that favor information corruption over integrity. They restore the sanctity of social communications and individual expression.

No secret extraction means no illegitimate concentrations of knowledge about people. No concentrations of knowledge means no targeting algorithms. No targeting means that corporations can no longer control and curate information flows and social speech or shape human behavior to favor their interests. Regulating extraction would eliminate the surveillance dividend and with it the financial incentives for surveillance.

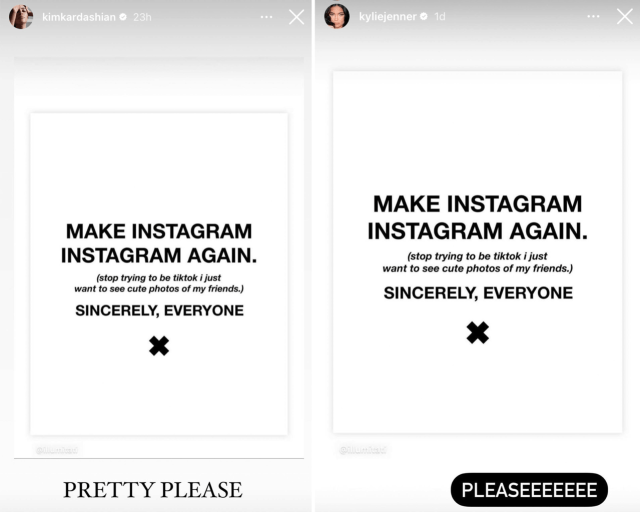

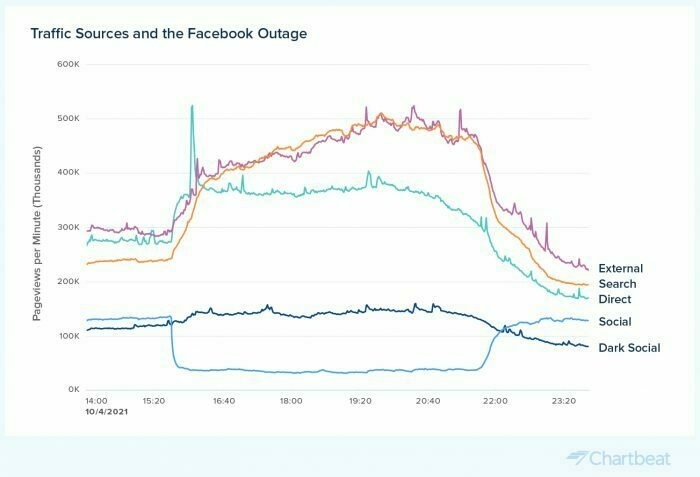

Traffic to news sites went up during the Facebook outage.

It’s really problematic that most people get their news via algorithmic news feeds.

On August 3, 2018, Facebook went down for 45 minutes. That’s a little baby outage compared to the one this week, when, on October 4, Facebook, Instagram, and WhatsApp were down for more than five hours. Three years ago, the 45-minute Facebook break was enough to get people to go read news elsewhere, Chartbeat’s Josh Schwartz wrote for us at the time.

Source: When Facebook went down this week, traffic to news sites went up » Nieman Journalism Lab

So what happened this time around? For a whopping five-hours-plus, people read news, according to data Chartbeat gave us this week. (And they went to Twitter; Chartbeat saw Twitter traffic up 72%. If Bad Art Friend had been published on the same day as the Facebook outage, Twitter would have literally exploded, presumably.)

There are persons who, when they cease to shock us, cease to interest us

It's difficult not to say "I told you so" when things play out exactly as predicted. Four years ago, when Donald Trump was sworn in as the 45th President of the USA, many had ominous forebodings.

Donald Trump’s inaugural address was a declaration of war on everything represented by these choreographed civilities. President Trump – it’s time to begin to get used to those jarringly ill-fitting words – did not conjure a deathless phrase for the day. His words will not lodge in the brain in any of the various uplifting ways that the likes of Lincoln, Roosevelt, Kennedy or Reagan once achieved. But the new president’s message could not have been clearer. He came to shatter the veneer of unity and continuity represented by the peaceful handover. And he may have succeeded. In 1933, Roosevelt challenged the world to overcome fear. In 2017, Mr Trump told the world to be very afraid.

The Guardian view on Donald Trump’s inauguration: a declaration of political war (January 2017)

He was all bluster, we were told. That it was rhetoric and would never be followed up with action.

Leaders are judged by their first 100 days in office. Wikipedia has a page outlining what Trump did during his, including things that, looking back from the vantage point of 2021, seem like warning shots: rolling back gun control legislation, stoking fears around voter fraud, cracking down on illegal immigration, freezing federal job hiring (except military), and engaging in tax reform to the benefit of the rich.

As a History teacher, it always struck me as odd that Adolf Hitler, a man born in Austria with brown hair, managed to lead a fascist party that extolled the virtues of being German and having blond hair. These days, I'm equally baffled that some of the richest people in our society — Donald Trump, Nigel Farage, Jacob Rees-Mogg — can pass themselves off as 'anti-elite'.

Much of their ability to do so is by creating an alternative reality with the aid of social networks like Facebook, Twitter, and YouTube. These replace traditional gatekeepers to information with algorithms tweaked for engagement, attention, and profit.

As we know, whipping up hatred and peddling conspiracy theories puts these algorithms into overdrive, and ensures those who agree with the content see what's shared. But this approach also reaches those who don't agree with it, by virtue of people seeking to reject and push back on it. Meanwhile, of course, the platforms rake in $$$ from advertisers.

I get the feeling that there are a great number of people who do not understand the way the world works in 2021. I am probably one of them. In fact, given how much control we've given to algorithms in recent years, perhaps no-one truly understands.

One thing for sure, though, is that banning Donald Trump from Facebook and Instagram indefinitely is too little, too late. These platforms, along with others, downplayed his and other 'alt-right' hate speech for fear of being penalised.

Pandora's Box is open. Those who realise that everything is a construct and theory-laden will control those who don't. The latter will be reduced to merely wandering around an alternative reality, like protesters in Statuary Hall, waiting to be told what to do next.

Quotation-as-title by F.H. Bradley

Slowly-boiling frogs in Facebook's surveillance panopticon

I can't think of a worse company than Facebook to be creating an IRL surveillance panopticon. But, I have to say, it's entirely on-brand.

On Wednesday, the company announced a plan to map the entire world, beyond street view. The company is launching a set of glasses that contains cameras, microphones, and other sensors to build a constantly updating map of the world in an effort called Project Aria. That map will include the inside of buildings and homes and all the objects inside of them. It’s Google Street View, but for your entire life.

Dave Gershgorn, Facebook’s Project Aria Is Google Maps — For Your Entire Life (OneZero)

We're like slowly-boiling frogs with this stuff. Everything seems fine. Until it's not.

The company insists any faces and license plates captured by Aria glasses wearers will be anonymized. But that won’t protect the data from Facebook itself. Ostensibly, Facebook will possess a live map of your home, pictures of your loved ones, pictures of any sensitive documents or communications you might be looking at with the glasses on, passwords — literally your entire life. The employees and contractors who have agreed to wear the research glasses are already trusting the company with this data.

Dave Gershgorn, Facebook’s Project Aria Is Google Maps — For Your Entire Life (OneZero)

With Amazon cosying up to police departments in the US with its Ring cameras, we really are hurtling towards surveillance states in the West.

Who has access to see the data from this live 3D map, and what, precisely, constitutes private versus public data? And who makes that determination? Faces might be blurred, but people can be easily identified without their faces. What happens if law enforcement wants to subpoena a day’s worth of Facebook’s LiveMap? Might Facebook ever build a feature to try to, say, automatically detect domestic violence, and if so, what would it do if it detected it?

Dave Gershgorn, Facebook’s Project Aria Is Google Maps — For Your Entire Life (OneZero)

Judges already requisition Fitbit data to solve crimes. No matter what Facebook say are their intentions around Project Aria, this data will end up in the hands of law enforcement, too.


To pursue the unattainable is insanity, yet the thoughtless can never refrain from doing so

💬 The Surprising Power of Simply Asking Coworkers How They’re Doing

🤔 Facebook Maybe Not Singlehandedly Undermining Democracy With Political Content, Says Facebook

👂 Unnervingly good entry in the "what languages sound like to non-speakers" genre

⚔️ Could a Peasant defeat a Knight in Battle?

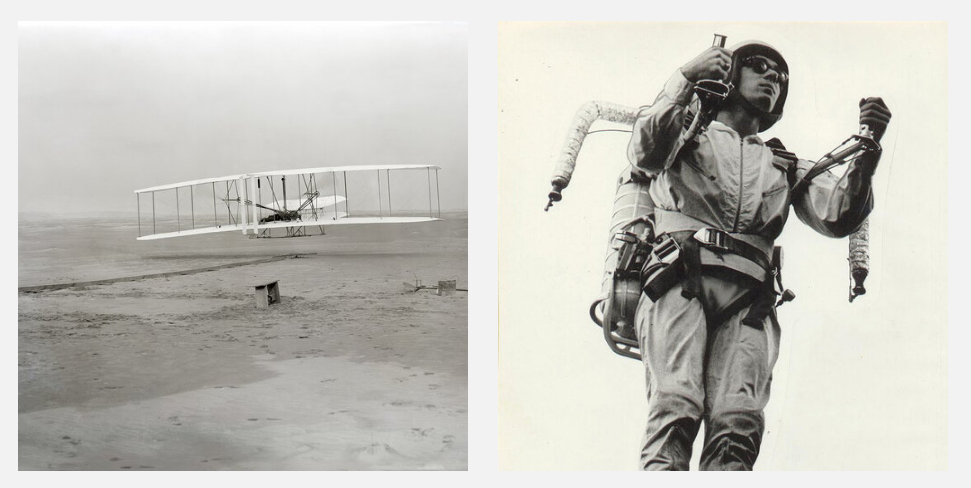

Quotation-as-title from Marcus Aurelius. Image from top-linked post.

Using WhatsApp is a (poor) choice that you make

People often ask me about my stance on Facebook products. They can understand that I don't use Facebook itself, but what about Instagram? And surely I use WhatsApp? Nope.

Given that I don't usually have a single place to point people who want to read about the problems with WhatsApp, I thought I'd create one.

WhatsApp is a messaging app that was acquired by Facebook for the eye-watering amount of $19 billion in 2014. Interestingly, a BuzzFeed News article from 2018 cites confidential documents from the time leading up to the acquisition that were acquired by the UK's Department for Culture, Media, and Sport. They show the threat WhatsApp posed to Facebook at the time.

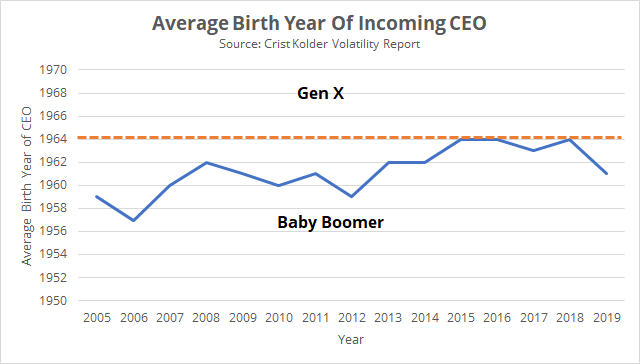

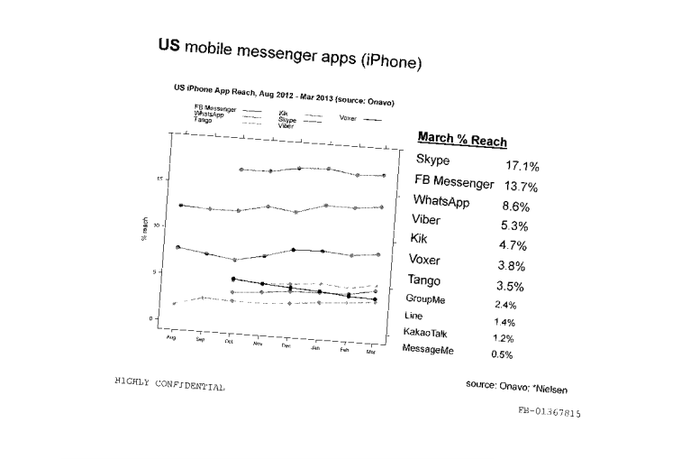

As you can see from the above chart, Facebook executives were shown in 2013 that WhatsApp (8.6% reach) was growing rapidly and posed a huge threat to Facebook Messenger (13.7% reach).

So Facebook bought WhatsApp. But what did they buy? If, as we're led to believe, WhatsApp is 'end-to-end encrypted' then Facebook don't have access to the messages of users. So what's so valuable?

Brian Acton, one of the founders of WhatsApp (and a man who got very rich through its sale) has gone on record saying that he feels like he sold his users' privacy to Facebook.

Facebook, Acton says, had decided to pursue two ways of making money from WhatsApp. First, by showing targeted ads in WhatsApp’s new Status feature, which Acton felt broke a social compact with its users. “Targeted advertising is what makes me unhappy,” he says. His motto at WhatsApp had been “No ads, no games, no gimmicks”—a direct contrast with a parent company that derived 98% of its revenue from advertising. Another motto had been “Take the time to get it right,” a stark contrast to “Move fast and break things.”

Facebook also wanted to sell businesses tools to chat with WhatsApp users. Once businesses were on board, Facebook hoped to sell them analytics tools, too. The challenge was WhatsApp’s watertight end-to-end encryption, which stopped both WhatsApp and Facebook from reading messages. While Facebook didn’t plan to break the encryption, Acton says, its managers did question and “probe” ways to offer businesses analytical insights on WhatsApp users in an encrypted environment.

Parmy Olson (Forbes)

The other way Facebook wanted to make money was to sell tools to businesses allowing them to chat with WhatsApp users. These tools would also give "analytical insights" on how users interacted with WhatsApp.

Facebook was allowed to acquire WhatsApp (and Instagram) despite fears around monopolistic practices. This was because they made a promise not to combine data from various platforms. But, guess what happened next?

In 2014, Facebook bought WhatsApp for $19b, and promised users that it wouldn't harvest their data and mix it with the surveillance troves it got from Facebook and Instagram. It lied. Years later, Facebook mixes data from all of its properties, mining it for data that ultimately helps advertisers, political campaigns and fraudsters find prospects for whatever they're peddling. Today, Facebook is in the process of acquiring Giphy, and while Giphy currently doesn’t track users when they embed GIFs in messages, Facebook could start doing that anytime.

Cory Doctorow (EFF)

So Facebook is harvesting metadata from its various platforms, tracking people around the web (even if they don't have an account), and buying up data about offline activities.

All of this creates a profile. So yes, because of end-to-end encryption, Facebook might not know the exact details of your messages. But they know that you've started messaging a particular user account around midnight every night. They know that you've started interacting with a bunch of stuff around anxiety. They know how the people you message most tend to vote.

Do I have to connect the dots here? This is a company that sells targeted adverts, the kind of adverts that can influence the outcome of elections. Of course, Facebook will never admit that its platforms are the problem; it's always the responsibility of the user to be 'vigilant'.

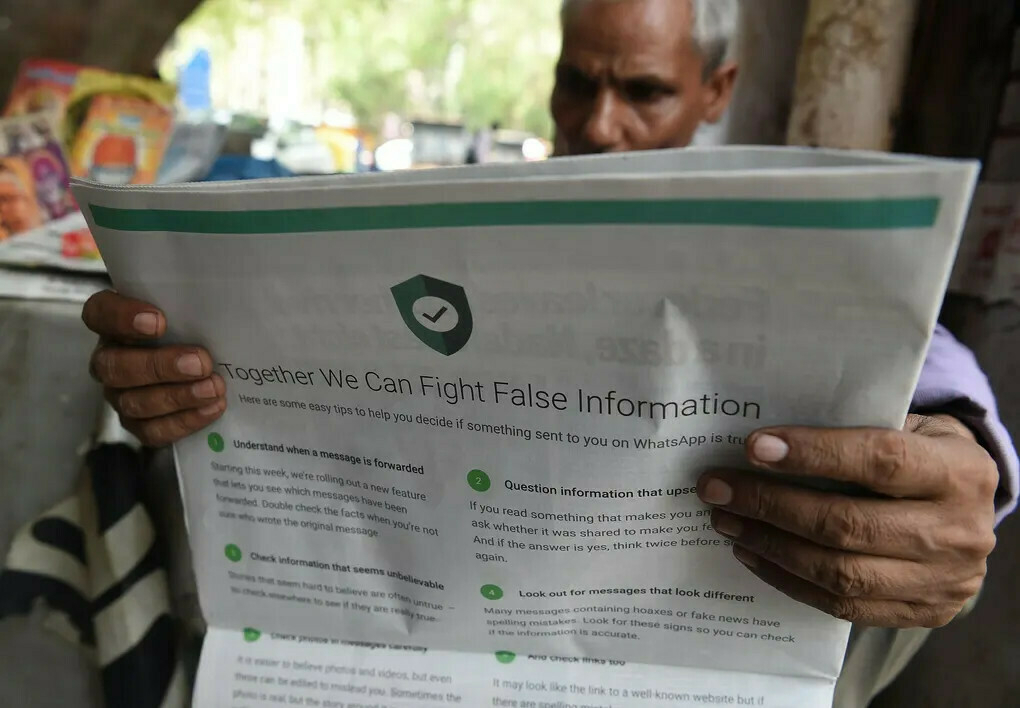

So you might think that you're just messaging your friend or colleague on a platform that 'everyone' uses. But your decision to go with the flow has consequences. It has implications for democracy. It has implications for the creation of a de facto monopoly over our digital information. And it has implications for the dissemination of false information.

The features that would later allow WhatsApp to become a conduit for conspiracy theory and political conflict were ones never integral to SMS, and have more in common with email: the creation of groups and the ability to forward messages. The ability to forward messages from one group to another – recently limited in response to Covid-19-related misinformation – makes for a potent informational weapon. Groups were initially limited in size to 100 people, but this was later increased to 256. That’s small enough to feel exclusive, but if 256 people forward a message on to another 256 people, 65,536 will have received it.

[...]

A communication medium that connects groups of up to 256 people, without any public visibility, operating via the phones in their pockets, is by its very nature, well-suited to supporting secrecy. Obviously not every group chat counts as a “conspiracy”. But it makes the question of how society coheres, who is associated with whom, into a matter of speculation – something that involves a trace of conspiracy theory. In that sense, WhatsApp is not just a channel for the circulation of conspiracy theories, but offers content for them as well. The medium is the message.

William Davies (The Guardian)

I cannot control the decisions others make, nor have I forced my opinions on my two children, who (despite my warnings) both use WhatsApp to message their friends. But, for me, the risk to myself and society of using WhatsApp is not one I'm happy with taking.

Just don't say I didn't warn you.

Header image by Rachit Tank

Saturday soundings

Black Lives Matter. The money from this month's kind supporters of Thought Shrapnel has gone directly to the 70+ community bail funds, mutual aid funds, and racial justice organizers listed here.

IBM abandons 'biased' facial recognition tech

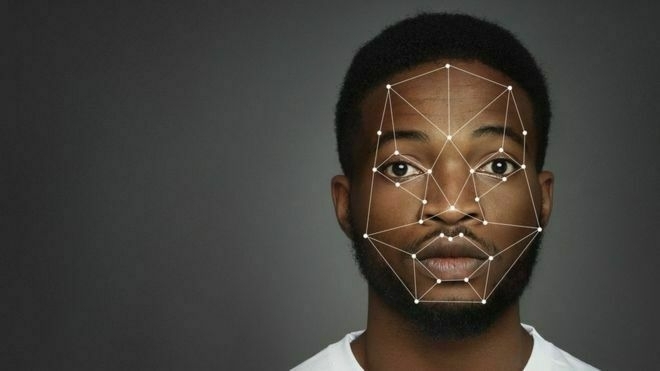

A 2019 study conducted by the Massachusetts Institute of Technology found that none of the facial recognition tools from Microsoft, Amazon and IBM were 100% accurate when it came to recognising men and women with dark skin.

And a study from the US National Institute of Standards and Technology suggested facial recognition algorithms were far less accurate at identifying African-American and Asian faces compared with Caucasian ones.

Amazon, whose Rekognition software is used by police departments in the US, is one of the biggest players in the field, but there are also a host of smaller players such as Facewatch, which operates in the UK. Clearview AI, which has been told to stop using images from Facebook, Twitter and YouTube, also sells its software to US police forces.

Maria Axente, AI ethics expert at consultancy firm PwC, said facial recognition had demonstrated "significant ethical risks, mainly in enhancing existing bias and discrimination".

BBC News

Like many newer technologies, facial recognition is already a battleground for people of colour. This is a welcome, if potentially cynical, move by IBM who, let's not forget, literally provided technology to the Nazis.

How Wikipedia Became a Battleground for Racial Justice

If there is one reason to be optimistic about Wikipedia’s coverage of racial justice, it’s this: The project is by nature open-ended and, well, editable. The spike in volunteer Wikipedia contributions stemming from the George Floyd protests is certainly not neutral, at least to the extent that word means being passive in this moment. Still, Koerner cautioned that any long-term change of focus to knowledge equity was unlikely to be easy for the Wikipedia editing community. “I hope that instead of struggling against it they instead lean into their discomfort,” she said. “When we’re uncomfortable, change happens.”

Stephen Harrison (Slate)

This is a fascinating glimpse into Wikipedia and how the commitment to 'neutrality' affects coverage of different types of people and events.

Deeds, not words

Recent events have revealed, again, that the systems we inhabit and use as educators are perfectly designed to get the results they get. The stated desire is there to change the systems we use. Let’s be able to look back to this point in two years and say that we have made a genuine difference.

Nick Dennis

Some great questions here from Nick, some of which are specific to education, whereas others are applicable everywhere.

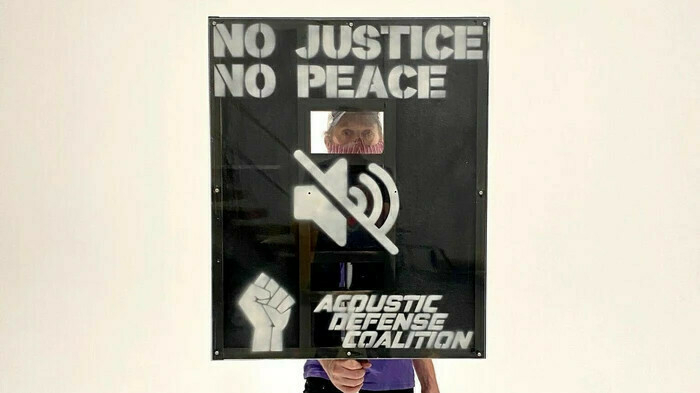

Audio Engineers Built a Shield to Deflect Police Sound Cannons

Since the protests began, demonstrators in multiple cities have reported spotting LRADs, or Long-Range Acoustic Devices, sonic weapons that blast sound waves at crowds over large distances and can cause permanent hearing loss. In response, two audio engineers from New York City have designed and built a shield which they say can block and even partially reflect these harmful sonic blasts back at the police.

Janus Rose (Vice)

For those not familiar with the increasing militarisation of police in the US, this is an interesting read.

CMA to look into Facebook's purchase of gif search engine

The Competition and Markets Authority (CMA) is inviting comments about Facebook’s purchase of a company that currently provides gif search across many of the social network’s competitors, including Twitter and the messaging service Signal.

[...]

[F]or Facebook, the more compelling reason for the purchase may be the data that Giphy has about communication across the web. Since many services that integrate with the platform not only use it to find gifs, but also leave the original clip hosted on Giphy’s servers, the company receives information such as when a message is sent and received, the IP address of both parties, and details about the platforms they are using.

Alex Hern (The Guardian)

In my 2012 TEDx Talk I discussed the memetic power of gifs. Others might find this news surprising, but I don't think I would have been surprised even back then that it would be such a hot topic in 2020.

Also by Hern this week is an article on Twitter's experiments around getting people to actually read things before they tweet/retweet them. What times we live in.

Human cycles: History as science

To Peter Turchin, who studies population dynamics at the University of Connecticut in Storrs, the appearance of three peaks of political instability at roughly 50-year intervals is not a coincidence. For the past 15 years, Turchin has been taking the mathematical techniques that once allowed him to track predator–prey cycles in forest ecosystems, and applying them to human history. He has analysed historical records on economic activity, demographic trends and outbursts of violence in the United States, and has come to the conclusion that a new wave of internal strife is already on its way. The peak should occur in about 2020, he says, and will probably be at least as high as the one in around 1970. “I hope it won't be as bad as 1870,” he adds.

Laura Spinney (Nature)

I'm not sure about this at all, because if you go looking for examples of something to fit your theory, you'll find it. Especially when your theory is as generic as this one. It seems like a kind of reverse fortune-telling?

Universal Basic Everything

Much of our economies in the west have been built on the idea of unique ideas, or inventions, which are then protected and monetised. It’s a centuries old way of looking at ideas, but today we also recognise that this method of creating and growing markets around IP protected products has created an unsustainable use of the world’s natural resources and generated too much carbon emission and waste.

Open source and creative commons moves us significantly in the right direction. From open sharing of ideas we can start to think of ideas, services, systems, products and activities which might be essential or basic for sustaining life within the ecological ceiling, whilst also re-inforcing social foundations.

Tessy Britton

I'm proud to be part of a co-op that focuses on openness of all forms. This article is a great introduction to anyone who wants a new way of looking at our post-COVID future.

World faces worst food crisis for at least 50 years, UN warns

Lockdowns are slowing harvests, while millions of seasonal labourers are unable to work. Food waste has reached damaging levels, with farmers forced to dump perishable produce as the result of supply chain problems, and in the meat industry plants have been forced to close in some countries.

Even before the lockdowns, the global food system was failing in many areas, according to the UN. The report pointed to conflict, natural disasters, the climate crisis, and the arrival of pests and plant and animal plagues as existing problems. East Africa, for instance, is facing the worst swarms of locusts for decades, while heavy rain is hampering relief efforts.

The additional impact of the coronavirus crisis and lockdowns, and the resulting recession, would compound the damage and tip millions into dire hunger, experts warned.

Fiona Harvey (The Guardian)

The knock-on effects of COVID-19 are going to be with us for a long time yet. And these second-order effects will themselves have effects which, with climate change also being in the mix, could lead to mass migrations and conflict by 2025.

Mice on Acid

What exactly a mouse sees when she’s tripping on DOI—whether the plexiglass walls of her cage begin to melt, or whether the wood chips begin to crawl around like caterpillars—is tied up in the private mysteries of what it’s like to be a mouse. We can’t ask her directly, and, even if we did, her answer probably wouldn’t be of much help.

Cody Kommers (Nautilus)

The bit about 'ego dissolution' in this article, which is ostensibly about how to get legal hallucinogens to market, is really interesting.

Header image by Dmitry Demidov