Intermission

Update: I’m posting notes, ideas, and reflections on a new webapp I’ve created called Substrate. There’s an RSS feed but no newsletter.

[C]urrent optimization is long-term anachronism. More here.

Image: City of Vancouver Archives

The Art of Money Getting

I didn’t realise that PT Barnum was 60 years old when he co-founded the travelling show that became the Barnum & Bailey Circus. And he was 70 when he turned one of his lectures into a book called The Art of Money Getting.

Core Principles

- Don’t Mistake Your Vocation

Barnum’s first rule: pick the work you’re built for, then aim to be the best at it. Most people get this backward. They take whatever job pays and spend decades fighting upstream. The people who succeed have a knack for what they do. Find your knack first.

- Avoid Debt Like the Plague

Debt eats self-respect. Barnum says young people, especially, should avoid it. The moment you owe somebody money, you’ve handed them a piece of your freedom. The whole game is keeping income above outgo.

- Whatever You Do, Do It With All Your Might

Half-doing is expensive. Barnum watched neighbors spend whole lifetimes poor because they only kind of worked, while somebody else got rich doing the same job thoroughly. The people who go all in pull ahead of the ones who don’t.

- Preserve Your Integrity

Nobody buys from someone they don’t trust. You can be the friendliest merchant in town, but if a customer suspects you of cheating, they’ll walk to the next shop. Dishonesty might pay this week. It costs you over a lifetime. Reputation is the actual asset.

Source: Cool Tools

Image: Public Domain Pictures

We are mature in one realm, childish in another

We do not grow absolutely, chronologically. We grow sometimes in one dimension, and not in another; unevenly. We grow partially. We are relative. We are mature in one realm, childish in another. The past, present, and future mingle and pull us backward, forward, or fix us in the present. We are made up of layers, cells, constellations.

– Anaïs Nin

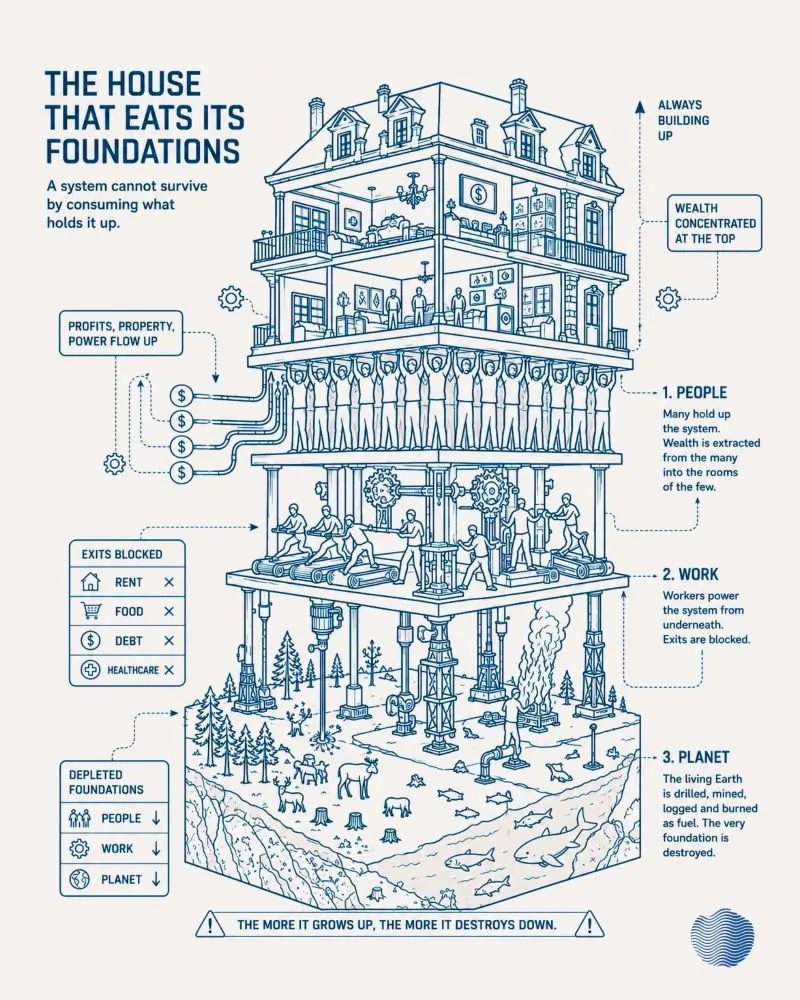

The house that eats its foundations

I really like this diagram, which takes the classic ‘pyramid of the capitalist system’ cartoons as its touchstone. The twist is that it’s not making a point about workers but about the planet as a whole.

Source: LinkedIn

Reprogramming the athlete’s visual system to function at peak efficiency

I learned about OKKULO at the Thinking Digital conference. I might be biased because the inventor is local and it’s helping my team (Sunderland) but this looks incredible.

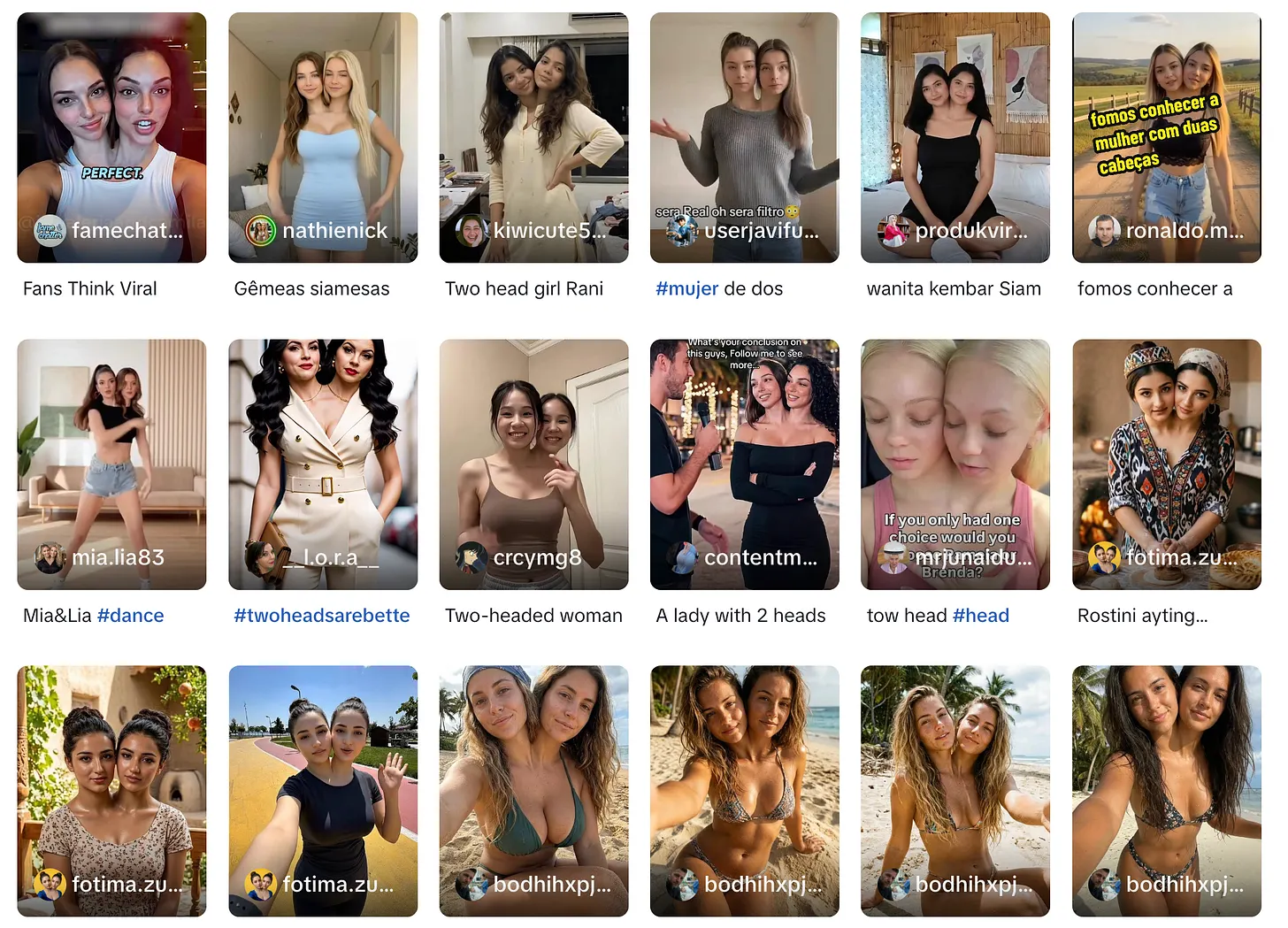

New genres are emerging that exploit cognitive and moral resources in ways that did not have technological pathways before

Marcus Bösch with a really interesting insight into the aesthetics of TikTok through the use of some weird examples. He uses the work of Steyerl (2023), Meyer (2025), and Toister & Zylinska (2025) to explain it all. Well worth clicking through and reading the whole thing.

Slop strategies have been evolving ever since Jesus sat down on a huge shrimp in 2024. AI content production is now sophisticated enough to invent new affective hooks that did not previously exist.

New genres are emerging that exploit cognitive and moral resources in ways that did not have technological pathways before.

[…]

Roland Meyer argues that AI-generated images are optimised not against the real world but against other images already in circulation. They are trained on billions of platform images and rewarded for matching what viewers already expect to see. Meyer, building on a term from Jacob Birken, calls this platform realism — a realism that has nothing to do with reality and everything to do with image-familiarity.

[…]

Meyer adds one more thing worth keeping. AI-generated images, he points out, are “disposable by design.” Producers generate dozens to find one that engages. Most are never seen.

[…]

Every time you see an AI-generated image — even one you know is fake, even one you mock or scroll past fast — the type it represents becomes a tiny bit more familiar to your imagination. Across thousands of variants seen by millions of viewers, this slowly changes what feels normal, what feels imaginable, what counts as a recognisable shape in the world.

[…]

We are still at the beginning of a transformative technology whose effects we can barely measure — partly because the changes sit just below the threshold of attention. A weird video here, a strange video there, and yet each one is part of a process that is shifting how we consume media and what we treat as something ordinary.

Source: Understanding TikTok

Drug testing on brains hovering between life and death

I can see this going the same way as the abortion debate. I know what I, personally, think about this (once I’m dead, I’m dead, and no amount of electrical stimulation is going to reanimate my consciousness) but I think there will be significant pushback from people who have perhaps read a little too much Mary Shelley.

Just a day ago, the brain was in a living person. Now, hours after its owner died, it sits on a cart draped in tubes that quiver as they pump liters of blood substitute and other fluids through the organ, supplying oxygen and removing waste. With most of its key functions intact but its electrical activity quenched by anesthesia, the brain hovers between life and death. As it metabolizes experimental drugs, sensors record its reactions, capturing hundreds of data points on its cells, proteins, and physiology. Then, after 24 hours in this state, it will be sliced into hundreds of pieces for more detailed study.

The brain is one of more than 700 that the 5-year-old biotech startup Bexorg has nurtured and studied using a set of proprietary brain-sustaining machines it calls BrainEx. The platform grants researchers an intimate look into how potential therapies might work inside brains with neurodegenerative diseases such as Parkinson’s, Alzheimer’s, or amyotrophic lateral sclerosis. Bexorg can biopsy the brains and discover how long a drug stays in cells, whether it hits its molecular target, and any hints of side effects.

The system promises far more realistic conditions for testing drugs than lab animals or cells in a dish, its developers say. Whole brains come with decades of environmental exposures, histories of drug treatments, and unique genetics that can affect responses to experimental medicines, says physician Zvonimir Vrselja, one of Bexorg’s founders and CEO. “You get cells that have been there for 60 to 80 years.”

[…]

The brains are already almost devoid of the coordinated neural firing necessary even for minimal consciousness, says Brendan Parent, a bioethicist at New York University Langone Health and one of six ethicists on Bexorg’s advisory board. But the company also forestalls any electrical activity with the anesthetic propofol, among other measures. Bexorg obtains brains in partnership with organizations that procure donated organs for transplantation, and Vrselja says once families understand the company’s process and goals, their response is overwhelmingly positive.

[…]

The company is also developing a machine learning model called NeuroLens that acts as a “virtual brain,” trained on the brain readouts, donors’ medical records, and protein and microscopy data from brain tissue. The model could eventually allow researchers to test new drug molecules before even going into a physical brain. In this virtual form, the pampered brains in Bexorg’s lab will live on even after their life support is withdrawn.

Source: Science

Image: Milad Fakurian

Why do people procrastinate?

I am not, you will be unsurprised to hear, a procrastinator. But I do procrastinate, and used to do so much more when I was younger.

This podcast episode from 2020, recommended to me this week so that I could better understand where someone was coming from, is absolutely fantastic. I learned so much, and the enthusiasm of both the host and interviewee is infectious.

Source: Ologies with Alie Ward

Field Notes on Productive Friction

I’m making my first zine, coming Q3 2026. There are only going to be 50 physical copies, no digital version, and I’ve already sold ~25% of them.

If you want one, please ensure you choose the correct postage option for your location.

A pocket guide to noticing the frictionless tools that remove your agency, containing eight patterns of capture, plus one self-administered audit. Making the case for reflection over convenience in a world of doomscrolling.

Source: Cognitive Wallpaper

I have led a toothless life, he thought. A toothless life. I have never bitten into anything. I was waiting. I was reserving myself for later on—and I have just noticed that my teeth have gone.

– Jean-Paul Sartre, The Age of Reason

Digital legacy

I went to a MozAlums workshop run by Ian Forrester on Wednesday which discussed ‘digital legacy’. There was some really interesting discussion about how and when we delete our contacts, deal with digital artefacts after the passing of people we know, and automating some of the things that happen after we leave this mortal coil…

Source: Are.na

The impact of volcanoes on the Black Death

Scratch the surface, and underneath I’m still an enthusiastic History teacher; I’ve just no students to teach. So I find things like this Open Culture article, which helps piece together how the Black Death came to wipe out ~50% of Europe’s population in the 14th century, fascinating.

The impacts of the COVID-19 pandemic and the recent war in Iran, with their associated global shocks, are legible to us because we live in a global society based on scientific understanding. That wasn’t true ~650 years ago, so understanding how it all came about, and the impact it had, takes painstaking work.

If new findings by researchers from the University of Cambridge and the Leibniz Institute for the History and Culture of Eastern Europe are to be believed, a volcano’s eruption helped lead to the outbreak and spread of the Black Death across Europe in the fourteenth century. In the video above, British history and environmental science specialist Paul Whitewick explains the evidence on a visit to one of the abandoned medieval villages stricken by that plague.

As Cambridge’s Sarah Collins writes, “the evidence suggests that a volcanic eruption — or cluster of eruptions — around 1345 caused annual temperatures to drop for consecutive years due to the haze from volcanic ash and gases, which in turn caused crops to fail across the Mediterranean region.” Desperate Italian city-states thus fell back on trading with grain producers around the Black Sea. “This climate-driven change in long-distance trade routes helped avoid famine, but in addition to life-saving food, the ships were carrying the deadly bacterium that ultimately caused the Black Death, enabling the first and deadliest wave of the second plague pandemic to gain a foothold in Europe.”

An important clue came in the form of “information contained in tree rings from the Spanish Pyrenees, where consecutive ‘Blue Rings’ point to unusually cold and wet summers in 1345, 1346 and 1347 across much of southern Europe.” Records of lunar eclipses and layers of sulfur locked into ice cores dating to about the same time further heighten the probability of volcanic activity. Key to tying these disparate pieces of evidence together are changes in trade routes: on a map, Whitewick traces “movement increasing along these corridors, grain imports to the maritime republics of Venice and Genoa from north of the Black Sea and beyond, in 1347.” According to written records, the Black Death came to Britain the following year, arriving in “a country already shaped by failed harvests, weakened communities, and rising movement of people and goods.”

Source: Open Culture

Image: Wikimedia Commons

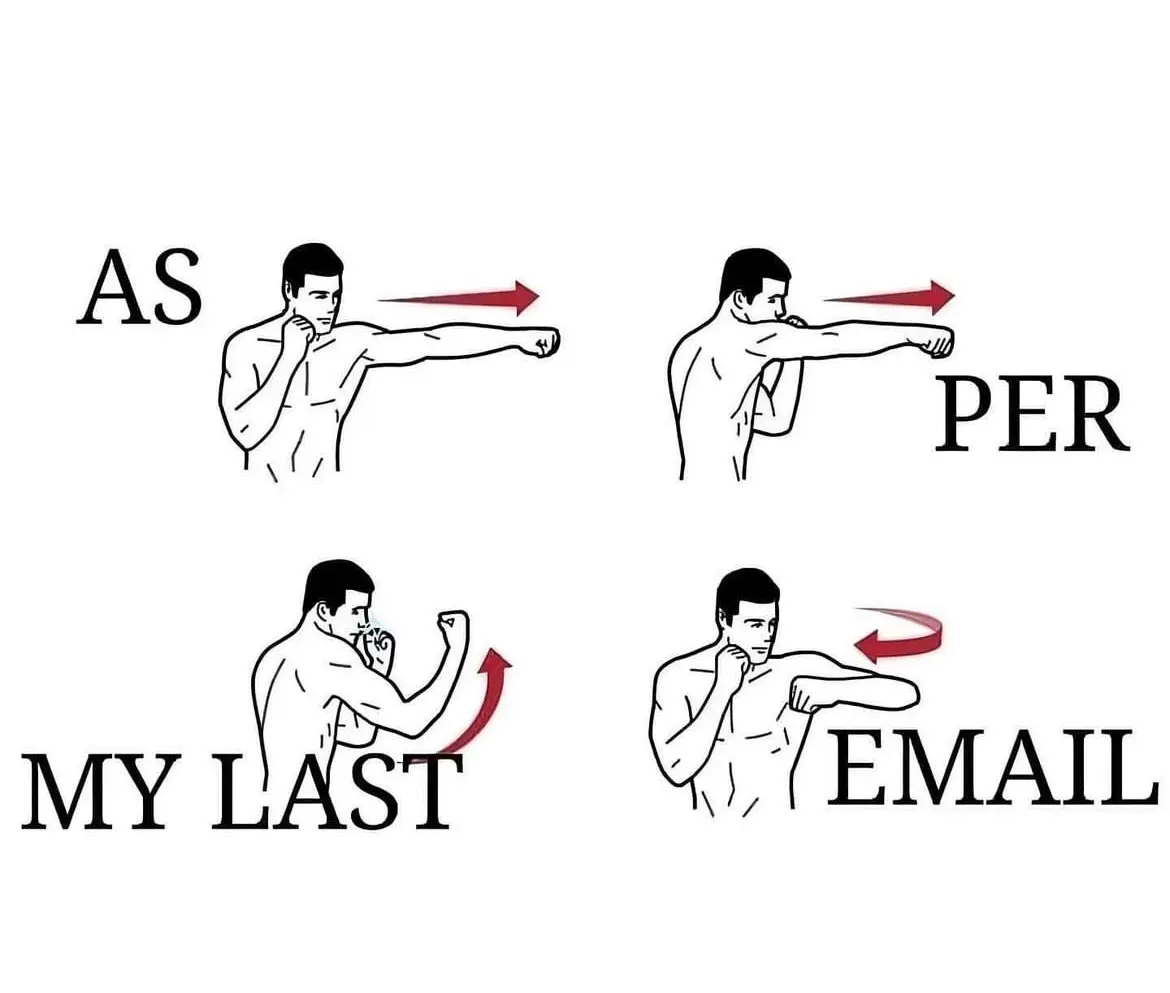

Don't write in the passive voice

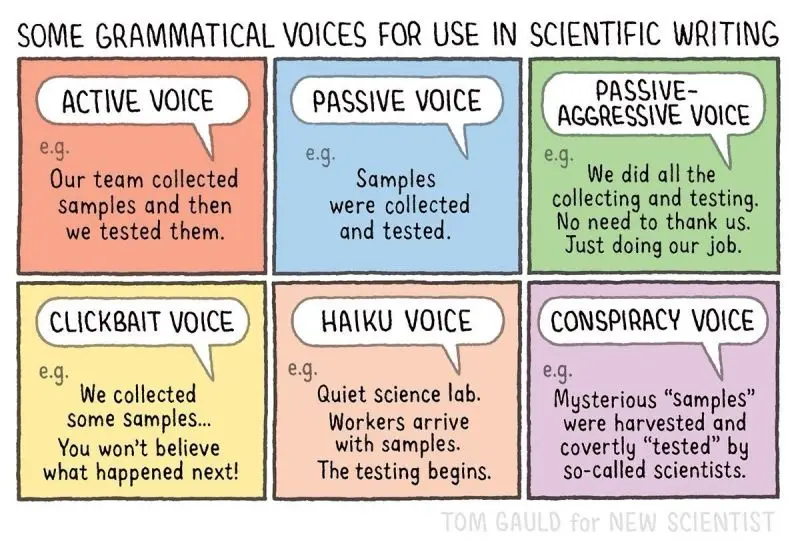

When I was 14 years old, I was told by my English teacher that I shouldn’t write in the ‘passive voice’. That advice has stuck with me ever since, as once you’ve seen it, you see it everywhere.

So I found this, by Tom Gauld, both funny and useful in terms of showing other people (including my kids) what I mean about the ways in which things can be written.

As someone pointed out in the comments, Gauld could have added ‘AI voice’. But then, he could have also added ‘LinkedIn voice’ (and so on, ad infinitum…)

Source: LinkedIn

There are always people who fall outside the bounds of what a service can handle

I’m grateful to Tom Watson for sending me this link to the blog of Richard Pope, author of the book Platformland. Pope talks about specific examples from UK public services to make the point that starting with very specific use cases is problematic.

First, because expecting them to scale to other use cases is a form of magical thinking; second, because people change over time.

The first problem with use-cases is that, because they limit the chaos, when you scale you often discover that you have scaled a service that only works for those use-cases. You then have to confront the chaos at scale. Many of the hardest delivery problems or riskiest assumptions may go untested until it is too late.

[…]

The second problem with use-cases, is that complexity is not static. The needs of users change because people’s lives change, political priorities shift, technology evolves. Like levees on a river, use-cases create coherence in the landscape by holding back an inherently chaotic system. However, at some point, levees can get overwhelmed.

[…]

Most services with tightly defined use-cases experience this in some shape or form. There are always people who fall outside the bounds of what a service can handle. And if it’s a public service, not serving these users is not an option.

Source: Platformland

Image: Richard Bell