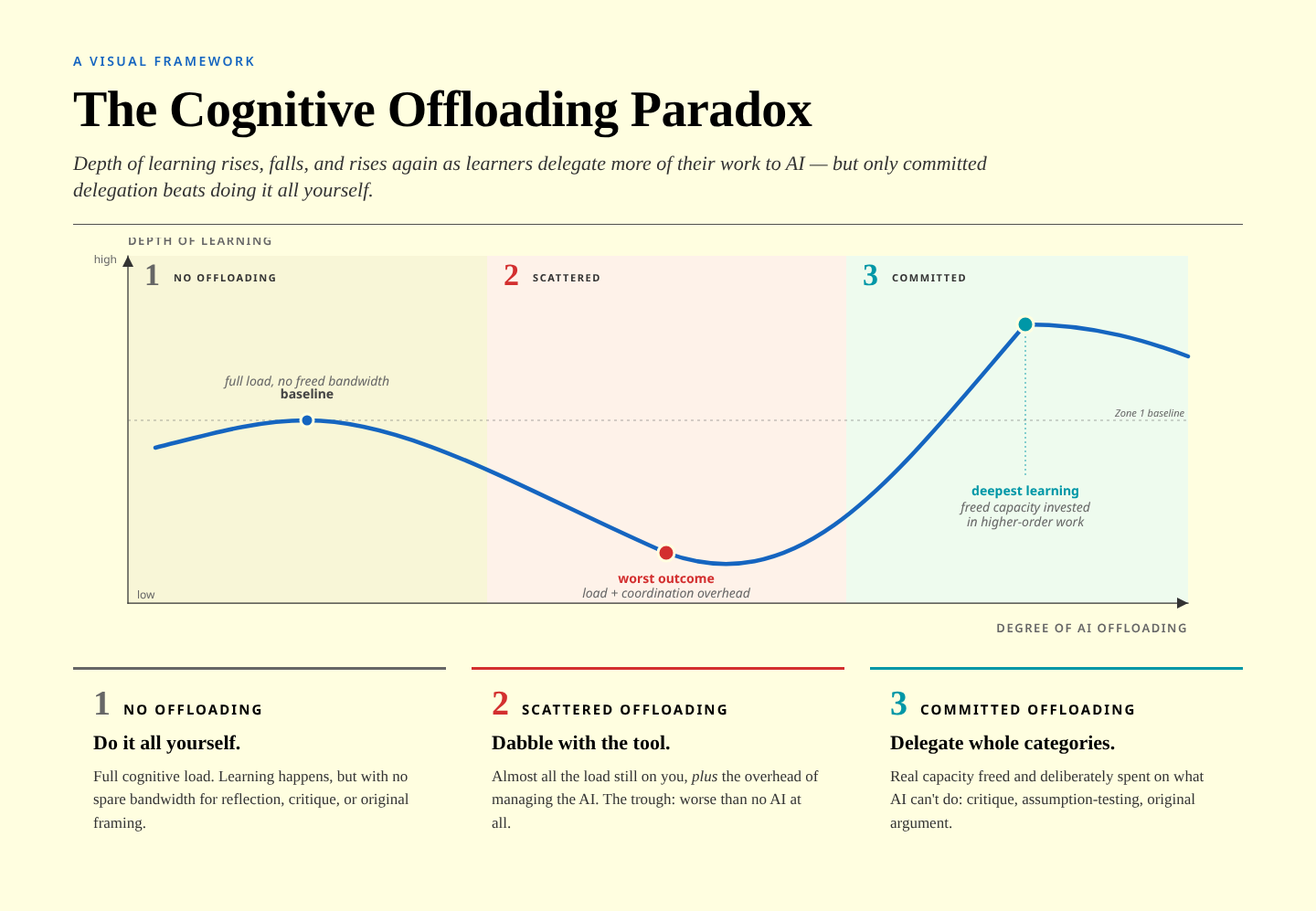

The "U-shaped curve" of cognitive offloading to AI tools

Almost a year ago, I responded here on Thought Shrapnel to what I thought was a terrible paper, which claimed to show, via brain scans, that using LLMs was bad for students' cognitive development.

As Philippa Hardman notes in this article, the academic literature has begun to catch up with what people actually using these tools already know:

The theoretical picture sharpened in 2025–26. Favero et al. (2025) warned that cognitive offloading undermines learning outcomes unless the mental effort that’s freed up gets redirected towards other meaningful tasks.

Then, in March 2026, Lodge & Loble went a step further, arguing that cognitive offloading isn’t inherently harmful to learners — what matters is whether it’s beneficial or detrimental, and the difference depends entirely on what happens with the freed-up cognitive capacity.

So over the course of 2025 and into 2026, the field was starting to move beyond “AI is bad for learning” toward a harder question: when is it bad, and when might it actually help? But the empirical evidence to answer that question — across a large sample, across cultures, with a clear mechanism — didn’t exist yet.

It turns out that context matters, as does your mental model of what you can/should do with a tool. The scientific detail is in Hardman’s post, but I like her summary:

Zone 1 — No offloading. The learner does everything manually. AI isn’t part of the process. They carry the full cognitive load: reading every source, writing every draft, organising every dataset. Learning happens, but it’s slow and capacity-constrained. There’s no freed-up bandwidth for higher-order reflection because every minute is spent on execution.

Zone 2 — Scattered, half-hearted offloading. The learner uses AI for a bit here and there — fixing a sentence, checking a fact, tidying a paragraph. This is where most current AI use in learning sits, and it’s the worst zone. The learner is still carrying almost all of the cognitive load, but now they’ve added the friction of managing the AI: deciding what to ask, evaluating whether the output is useful, switching between their own work and the tool. More effort, no meaningful benefit. This is what the negative studies measured.

Zone 3 — Committed, strategic offloading. The learner delegates entire categories of substantive work to AI: all the source summarisation, the full first-pass literature review, the complete data organisation. The cognitive savings are large enough to genuinely free capacity — and that freed capacity gets invested in the work AI can’t do: critiquing frameworks, questioning assumptions, constructing original arguments, making judgement calls. This is where the paradox kicks in. This is where transformative learning lives.

So, essentially, there’s a “U-shaped curve” of adaptation, as anyone familiar with Charles Handy’s Sigmoid Curve will be aware. It’s definitely worth clicking through to read Hardman’s “Cheat Sheet” which helps reframe learning activities.

Source: Dr Phil’s Newsletter

Image: Claude Opus 4.7