2023

Best of Thought Shrapnel 2023

Hello hello. I hope you're well 🙂

According to my stats, the following posts, all published in the last 12 months, were the most accessed on Thought Shrapnel.

What were your favourites? Is it one on this list? The archives can be found here.

1. The burnout curve

Published: 11th September

I stumbled across this on LinkedIn. There doesn’t seem to be an authoritative source yet other than the author’s (Nick Petrie) social media posts, which is a shame. So I’m quoting most of it here so I can find and refer to it in future.

2. AI writing detectors don't work

Published: 9th September

This article discusses OpenAI’s recent admission that AI writing detectors are ineffective, often yielding false positives and failing to reliably distinguish between human and AI-generated content. They advise against the use of automated AI detection tools, something that educational institutions will inevitably ignore.

3. Oh great, another skills passport

Published: 25th September

Not only is this the wrong metaphor, but it also diverts money and attention from fixing some of the real issues in the system.

4. Good news on Covid treatments

Published: 16th September

Well, this is promising. Researchers have identified a critical weakness in COVID-19: its reliance on specific human proteins for replication. The virus has an “N protein” which needs human cells to properly package its genome and propagate. Apparently, blocking this interaction could prevent the virus from infecting human cells.

5. The punishment for being authentic is becoming someone else's content

Published: 9th September

What I think is interesting is how online and offline used to be seen as completely separate. Then we realised the impact that offline life had on online life, and now we’re seeing the reverse: Instagram, TikTok, etc. having a huge impact on the spaces in which we exist offline.

6. Using AI to aid with banning books is another level of dystopia

Published: 17th August

However, what I’m concerned about is AI decision-making. In this case, a crazy law is being implemented by people who haven’t read the books in question and who outsource the decision to a language model that doesn’t really understand what’s being asked of it.

7. A philosophy of travel

Published: 30th August

This article critically examines the concept of travel, questioning its oft-claimed benefits of ‘enlightenment’ and ‘personal growth’. It cites various thinkers who have critiqued travel (including one of my favourites, Fernando Pessoa) suggesting that it can actually distance us from genuine human connection and meaningful experiences.

8. We need to talk about AI porn

Published: 25th August

As this article details, a lot of porn has already been generated. Again, setting aside any prudishness about people’s kinks, there are all kinds of philosophical, political, and legal issues at play here. Child pornography is abhorrent; how is our legal system going to deal with AI-generated versions? What about the inevitable ‘shaming’ of people via AI-generated sex acts?

9. Update your profile photo at least every three years

Published: 11th January

I think this is good advice. I try to update mine regularly, although I did realise that last year I chose a photo that was five years old! I prefer ‘natural’ photos that are taken in family situations which I then edit, rather than headshots these days.

10. Britain is screwed

Published: 8th February

I followed a link from this article to some OECD data which, as shown in the chart below, reveals that the UK has even lower welfare payments than the US. The economy of our country is absolutely broken, mainly due to Brexit, but also due to the chasm between everyday people and the elites.

Have a happy new year when it arrives!

PS I've given up on Substack and, because I'm tired of moving platforms, I think I'll just send out emails via this site for now. More news on that soon.

Back next year!

That's it for Thought Shrapnel for 2023. Make sure you're subscribed for when we're back next year! (RSS / newsletter)

Image: Unsplash

Avoiding the 'Dark Triads'

Arthur C. Brooks, whose writing I always enjoy, writes on sociopaths, narcissists, and ‘Dark Triad’ personalities. These Dark Triads are characterised by narcissism, Machiavellianism, and psychopathy. They’re manipulative and harmful, and make up about 7% of the population, although interestingly they account for a significantly larger share of the male prison population.

Brooks talks about how to spot and avoid them in the workplace and on social media, and how to gravitate towards ‘Light Triad’ personalities instead. These embody positive traits like faith in humanity and humanism, and represent a more uplifting aspect of human nature. Thankfully, Light Triads are more common in the general population.

As far as the workplace is concerned, scholars have found that narcissists tend toward artistic, creative, and social careers; researchers also saw that Machiavellians, in particular, avoid careers that involve caring for others. Look out for Dark Triads, in other words, in professions that involve human contact, performance, and applause, but little concerned attention to other people. An obvious example might be politics; another would be show business. But the type can manifest in many careers and professions. At work, such individuals tend to exaggerate their own worth, show a distrustful attitude toward colleagues, act impulsively and irresponsibly, break rules, and lie.

Source: The Sociopaths Among Us—And How to Avoid Them | The Atlantic

[…]

The traits to look for are self-importance, a sense of entitlement, vanity, a victim mentality, a tendency to bend the truth or even openly lie, manipulativeness, grandiosity, a lack of remorse, and an absence of empathy. Probe for these characteristics particularly when on first dates and in job interviews. You might even want to take that test imaginatively on behalf of someone you suspect may have Triad traits and see what result you get.

Image: DALL-E 3

The 9-5 shift is a relatively recent invention

As a Xennial, I have all of the guilt for not working hard enough — along with a desire to live a life more fulfilling and holistic than my parents had. Generations below, including Gen Z and then of course my kids, think that working all of the hours is a bit crazy.

This article is about a viral TikTok video of a Gen Z ‘girl’ (although surely ‘young woman’?) crying because the 9-5 grind is “crazy… How do you have friends? How do you have time for dating? I don’t have time for anything, I’m so stressed out.”

It’s easy, as with so many things, for older generations to inflict on generations coming after them the crap that they themselves have had to deal with. But it doesn’t have to be this way. As the article says, the 9-5 job is a relatively recent invention and I, for one, don’t follow that convention.

When the video – which has been viewed nearly 50 million times across TikTok and Twitter – first started to spread, the comments weren’t sympathetic. She was trashed by neoliberal hustle and grind stans – most of whom seemed old enough to be her parents. “Gen Z girl finds out what a real job is like,” one X (formerly Twitter) user sneered. “Grown-ups don’t prioritise friends, or dating. Grown-ups prioritise being able to provide,” another commenter wrote, neglecting the fact that if you’re young, single, and have no friends, there isn’t really anyone to “provide” for.

Source: Nobody Wants Their Job to Rule Their Lives Anymore | VICE

But then the tide began to turn. People started to point out that “Gen Z girl” was right, actually. Work sucks! No one has any time for anything! Within days, she had become the figurehead for an increasingly common sentiment: We don’t want our lives to revolve around work anymore.

[…]

It doesn’t feel like an exaggeration to say young people have been gaslit by older generations when it comes to work. As wages stagnate and costs rise, the generation that got free university education and cheap housing have somehow convinced young people that if we’re sad and stressed then it’s simply a problem with our work ethic. We’re too sensitive, entitled, or demanding to hold down a “real job”, the story goes, when really most of us just want a decent night’s sleep and less debt.

[…]

It’s always worth reminding ourselves that the 9-5 shift is itself a relatively recent invention, not some sort of eternal truth, and hopefully soon we’ll see it as a relic from a bygone age. “It was set up to support our patriarchal society – men went to work and women stayed at home to cook and look after the family,” says Emma Last, founder of the workplace wellbeing programme Progressive Minds. “Things have obviously changed a lot since then, and we’re trying to find the balance between cooking meals, looking after ourselves, spending time with family and friends, and having relationships. Isn’t it a good thing that Gen Z are questioning it all?”

Towards an epistemology of the humanities

In the past decade a new field called the history of the humanities has been assembled out of pieces previously belonging to the history of learning, disciplinary histories, the history of science, and intellectual history. The new specialty tends to be more widely cultivated in languages that had never narrowed their vernacular cognates of the Latin scientia to refer only to the natural sciences, such as those of Dutch and German. So far, its practitioners have not been particularly interested in questions of epistemology. But just as the history of science has long served as a stimulus and sparring partner to the philosophy of science, perhaps the history of the humanities will eventually engender a philosophical counterpart. Even if it did, though, the question would remain: What would be the point? Just as many scientists query the need for an epistemology of science, many humanists may find an epistemology of the humanities superfluous: we know how to do what we do, and we’ll just get on with it, thank you very much.

Source: How We Know What We Know | In the Moment

I’m not so sure we really know how we know what we know. And even if we did, a great number of intelligent, well-educated people, our ideal readers and potential students, even our colleagues in other departments, wonder why what we teach and write counts as knowledge. The first step in justifying our ways of knowing to these doubters would be to justify them to ourselves.

Image: DALL-E 3

More like Grammarly than Hal 9000

I’m currently studying towards an MSc in Systems Thinking and earlier this week created a GPT to help me. I fed in all of the course materials, being careful to check the box saying that OpenAI couldn’t use it to improve their models.

It’s not perfect, but it’s really useful. Given the extra context, ChatGPT can not only help me understand key concepts on the course, but help relate them more closely to the overall context.

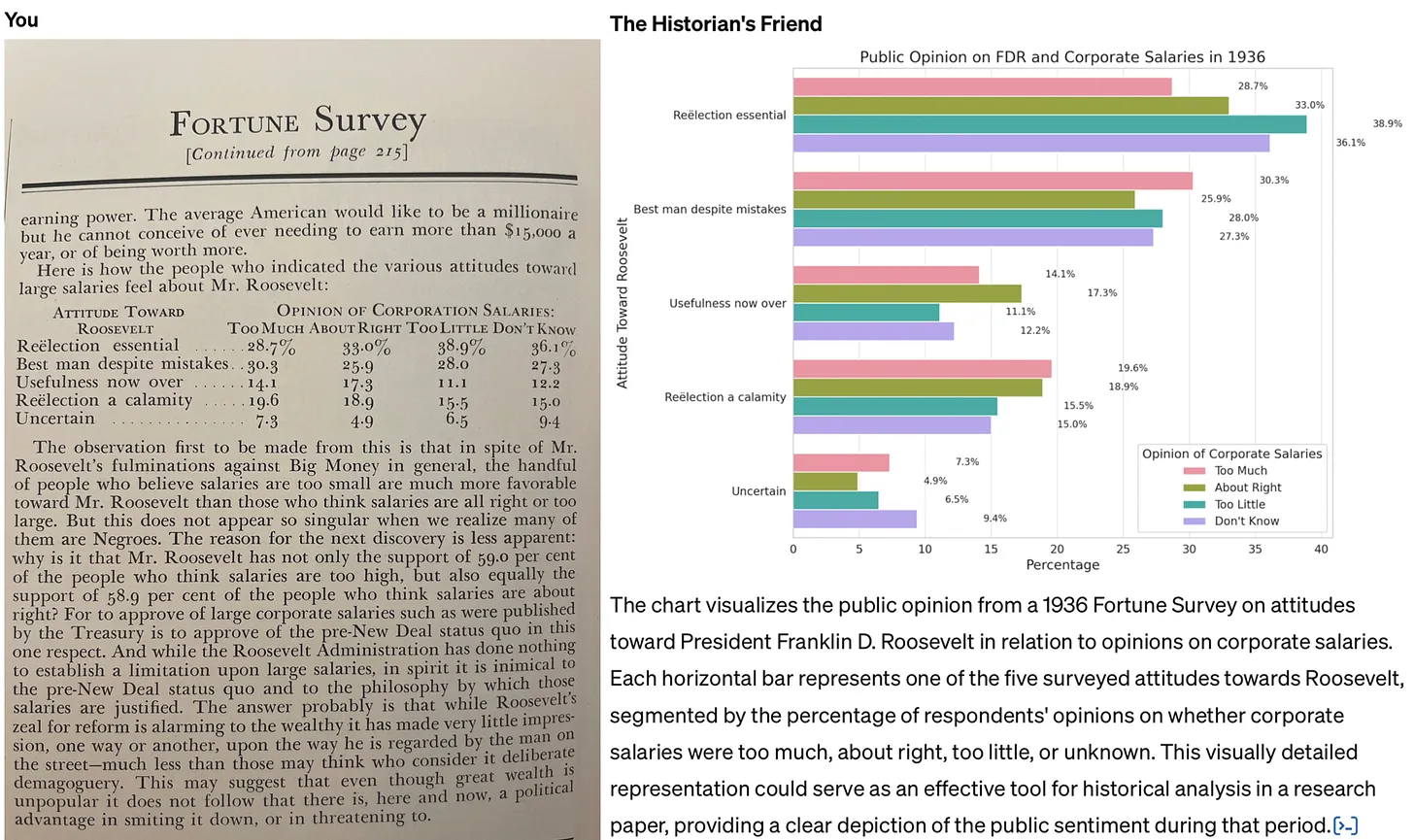

This example would have been really useful on the MA in Modern History I studied for 20 years ago. Back then, I was in the archives with primary sources such as minutes from the meetings of Victorians discussing educational policy, and reading reports. Being able to have an LLM do everything from explaining things in more detail, to guessing illegible words, to (as below) creating charts from data would have been super useful.

The key thing is to avoid following the path of least resistance when it comes to thinking about generative AI. I’m referring to the tendency to see it primarily as a tool used to cheat (whether by students generating essays for their classes, or professionals automating their grading, research, or writing). Not only is this use case of AI unethical: the work just isn’t very good. In a recent post to his Substack, John Warner experimented with creating a custom GPT that was asked to emulate his columns for the Chicago Tribune. He reached the same conclusion.

Source: How to use generative AI for historical research | Res Obscura

[…]

The job of historians and other professional researchers and writers, it seems to me, is not to assume the worst, but to work to demonstrate clear pathways for more constructive uses of these tools. For this reason, it’s also important to be clear about the limitations of AI — and to understand that these limits are, in many cases, actually a good thing, because they allow us to adapt to the coming changes incrementally. Warner faults his custom model for outputting a version of his newspaper column filled with cliché and schmaltz. But he never tests whether a custom GPT with more limited aspirations could help writers avoid such pitfalls in their own writing. This is change more on the level of Grammarly than Hal 9000.

In other words: we shouldn’t fault the AI for being unable to write in a way that imitates us perfectly. That’s a good thing! Instead, it can give us critiques, suggest alternative ideas, and help us with research assistant-like tasks. Again, it’s about augmenting, not replacing.

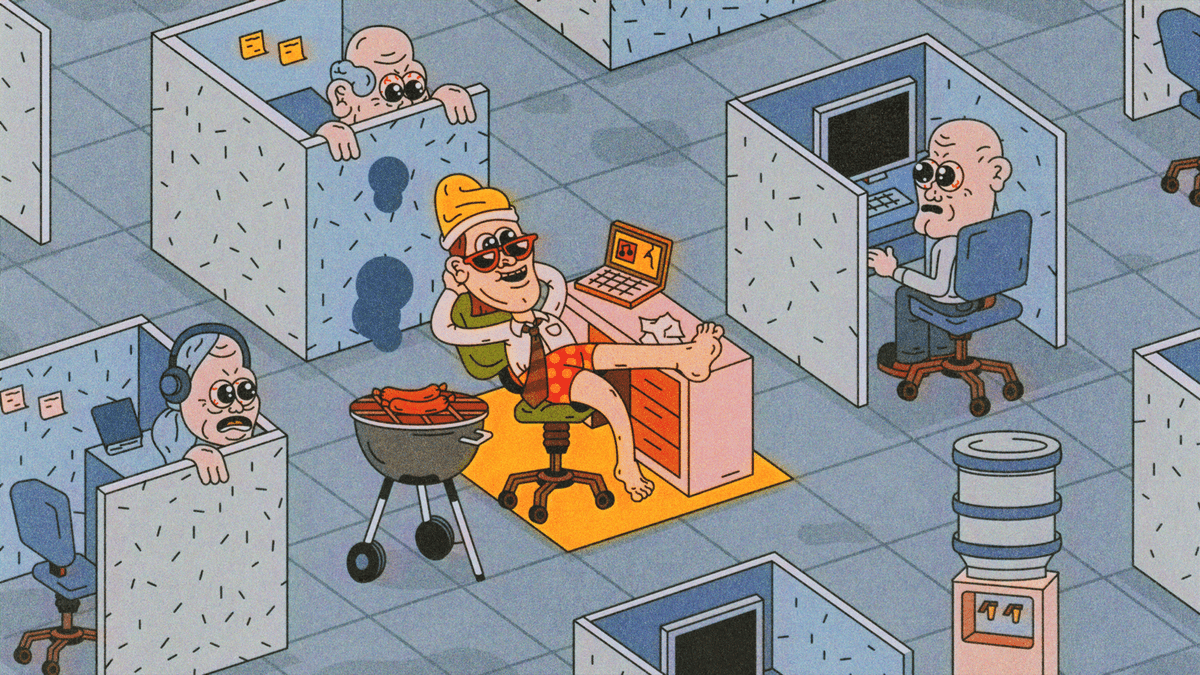

Overemployment as anti-precarity strategy

Historically, the way we fought back against oppressive employers and repressive regimes was to band together into unions. The collective bargaining power would help improve conditions and pay.

These days, in a world of the gig economy and hyper-individualism, that kind of collectivisation is on the wane. Enter remote workers deciding to take matters into their own hands, working multiple full-time jobs and being rewarded handsomely.

It’s interesting to notice that it seems to be very much a male, tech worker thing though. Of course, given that this was at the top of Hacker News, it will be used as an excuse to even more closely monitor the 99% of remote workers who aren’t doing this.

Holding down multiple jobs has long been a backbreaking way for low-wage workers to get by. But since the pandemic, the phenomenon has been on the rise among professionals like Roque, who have seized on the privacy provided by remote work to secretly take on two or more jobs — multiplying their paychecks without working much more than a standard 40-hour workweek. The move is not only culturally taboo, but it's also a fireable offense — one that could expose the cheaters to a lawsuit if they're caught. To learn their methods and motivations, I spent several weeks hanging out among the overemployed online. What, I wondered, does this group of W-2 renegades have to tell us about the nature of work — and of loyalty — in the age of remote employment?

Source: ‘Overemployed’ Workers Secretly Juggle Several Jobs for Big Salaries | Business Insider

[…]

The OE hustlers have some tried-and-true hacks. Taking on a second or third full-time job? Given how time-consuming the onboarding process can be, you should take a week or two of vacation from your other jobs. It helps if you can stagger your jobs by time zone — perhaps one that operates during New York hours, say, and another on California time. Keep separate work calendars for each job — but to avoid double-bookings, be sure to block off all your calendars as soon as a new meeting gets scheduled. And don’t skimp on the tech that will make your life a bit easier. Mouse jigglers create the appearance that you’re online when you’re busy tending to your other jobs. A KVM switch helps you control multiple laptops from the same keyboard.

Some OE hustlers brag about shirking their responsibilities. For them, being overemployed is all about putting one over on their employers. But most in the community take pride in doing their jobs, and doing them well. That, after all, is the single best way to avoid detection: Don’t give your bosses — any of them — a reason to become suspicious.

[…]

The consequences for getting caught actually appear to be fairly low. Matthew Berman, an employment attorney who has emerged as the unofficial go-to lawyer in the OE community, hasn’t encountered anyone who has been hit with a lawsuit for holding a second job. “Most of the time, it’s not going to be worth suing an employee,” he says. But many say the stress of the OE life can get to you. George, the software engineer, has trouble sleeping at night because of his fear of getting caught. Others acknowledge that the rigors of juggling multiple jobs have hurt their marriages. One channel on the OE Discord is dedicated to discussions of family life, mostly among dads with young kids. People in the channel sometimes ask for relationship advice, and the responses they get from the other dads are sweet. “Your regard for your partner,” one person advised of marriage, “should outweigh your desire for validation.”

There are better approaches than just having no friends at work

We get articles like this because we live in a world inescapably tied to neoliberalism and hierarchical ways of organising work. I’m sure the advice to “not make friends at work” is stellar survival advice in a large company, but it’s not the best way to ensure human flourishing.

I’ve definitely been burned by relationships at work, especially earlier in my career when managers used the ‘family’ metaphor. Thankfully, there’s a better way: own your own business with your friends! Then you can bring your full self to work, which is much like having your cake and eating it, too.

Real friends are people you can be yourself around and with whom you can show up being who you truly are—no editing needed. They are folks with whom you have developed a deep relationship over time that is mutual and flows in two ways. You are there for them and they are there for you. There is trust built.

Source: Why You Shouldn’t Make Friends at Work | Psychology Today Canada

At work, this relationship becomes very, very complex. Instead of being a true friendship, what ends up happening is that the socio-economic realities of your workplace come into play—and most often that poisons the well. When money is involved, it clouds any potential friendship. It makes the lines so blurry between real and contrived friendships that the waters become too murky to make clear and meaningful relationships. Is that a real friend, or do they want something from me that benefits them? Who can you really trust at work and what happens if they violate your trust? Is my boss really my friend or are they just trying to get me to work harder/longer/faster?

If, instead, we keep clear boundaries at work, we never fall into the trap of worrying about whom to trust and who has our best interest in mind. It prevents us from transferring our best interests to anyone else simply because we assume they are our friends. Why give that amazing power to someone else at work only to be disappointed?

Worse yet, people will often confuse co-workers with family, falling into the trap of having a “work mom,” “work dad,” or even a “work husband” or “work wife.” This can lead to a number of disastrous results that are well-documented, as family is not the same as work, and confusing the two has long-lasting ramifications that can stifle career success and lead to unethical behaviour. Keeping boundaries clear and your work life separate from your private life will help to alleviate this potential downfall and keep you focused on what really matters: the work.

Image: DALL-E 3

Building a system for success, without the glitches

Wise words from Seth Godin. It’s a twist on the advice to stop doing things that maybe used to work but don’t any more. The ‘glitch’ he’s talking about here isn’t just in terms of what might not be working for you or your organisation, but for society and humanity as a whole.

Many moths are attracted to light. That works fine when it’s a bright moon and an open field, but not so well for the moths if the light was set up as a bug trap.

Source: Finding the glitch | Seth’s Blog

Processionary caterpillars follow the one in front until their destination, even if they’re arranged in a circle, leading them to march until exhaustion.

It might be that you have built a system for your success that works much of the time, but there’s a glitch in it that lets you down. Or it might be that we live in a culture that creates wealth and possibility, but glitches when it fails to provide opportunity to others or leaves a mess in our front yards.

Image: DALL-E 3

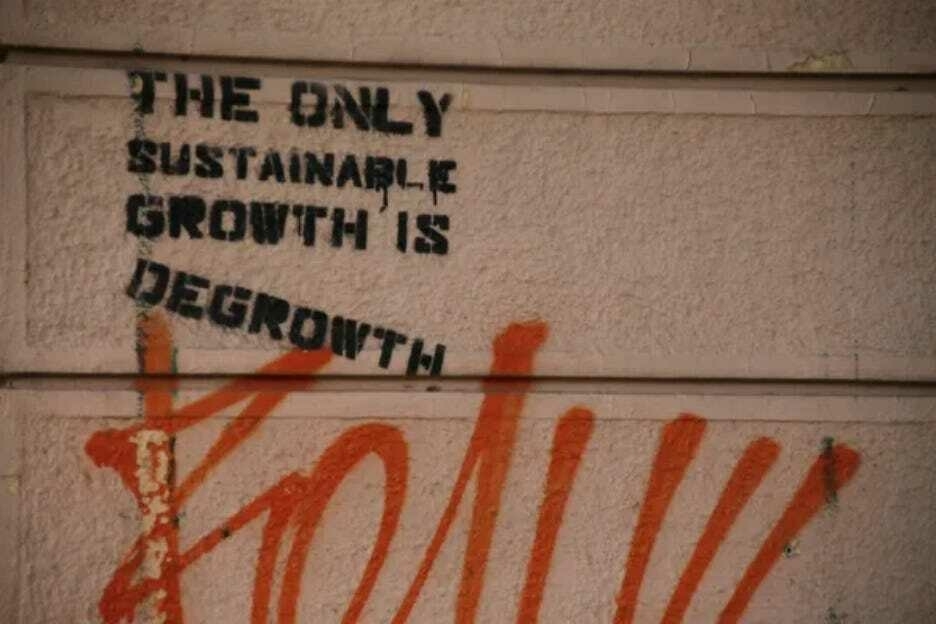

Is the only sustainable growth 'degrowth'?

This article by Noah Smith gave me pause for thought. There are plenty of people talking about ‘degrowth’ at the moment and, I have to say, I don’t know enough to have an opinion.

It’s really easy to get swept up in what other people who broadly share your outlook on life are sharing and discussing. While I definitely agree that ‘growth at all costs’ is problematic, and that ‘green growth’ is probably a sticking plaster, I’m not sure that ‘degrowth’ (as far as I understand it) is the answer?

Perhaps I need to do more reading. If it’s trying to measure things differently rather than just using GDP, then I’ve already written that I’m in favour. But just like calls to ‘abolish the police’ I’m not sure I can go fully along with that. Sorry.

I don’t want to beat this point to death, but I think it’s important to emphasize how unpleasant and inhumane a degrowth future would look like. People in rich countries would be forced to accept much lower standards of living, while people in developing countries would have a far more meager future to look forward to. This situation would undoubtedly cause resentment, leading to a backlash against the leaders who had mandated mass poverty. After the overthrow of degrowth regimes, we’d see the pendulum swing entirely toward leaders who promised infinite resource consumption, at which point the environment would be worse off than before. And this is in addition to the fact that degrowth would make it more difficult to invest in green energy and other technologies that protect the environment.

Source: Yes, it’s possible to imagine progressive dystopias | Noahpinion

So while I think we do need to worry about the potential negative consequences of growth and try our best to ameliorate those harms, I think trying to impoverish ourselves to save the environment would be a catastrophic mistake, for both us and for the environment. This is not something any progressive ought to fight for.

If you need a cheat sheet, it's not 'natural language'

Benedict Evans, whose post about leaving Twitter I featured last week, has written about AI tools such as ChatGPT from a product point of view.

He makes quite a few good points, not least that if you need ‘cheat sheets’ and guides on how to prompt LLMs effectively, then they’re not “natural language”.

Alexa and its imitators mostly failed to become much more than voice-activated speakers, clocks and light-switches, and the obvious reason they failed was that they only had half of the problem. The new machine learning meant that speech recognition and natural language processing were good enough to build a completely generalised and open input, but though you could ask anything, they could only actually answer 10 or 20 or 50 things, and each of those had to be built one by one, by hand, by someone at Amazon, Apple or Google. Alexa could only do cricket scores because someone at Amazon built a cricket scores module. Those answers were turned back into speech by machine learning, but the answers themselves had to be created by hand. Machine learning could do the input, but not the output.

Source: Unbundling AI | Benedict Evans

LLMs solve this, theoretically, because, theoretically, you can now not just ask anything but get an answer to anything.

[…]

This is understandably intoxicating, but I think it brings us to two new problems - a science problem and a product problem. You can ask anything and the system will try to answer, but it might be wrong; and, even if it answers correctly, an answer might not be the right way to achieve your aim. That might be the bigger problem.

[…]

Right now, ChatGPT is very useful for writing code, brainstorming marketing ideas, producing rough drafts of text, and a few other things, but for a lot of other people it looks a bit like those PCs ads of the late 1970s that promised you could use it to organise recipes or balance your cheque book - it can do anything, but what?

Cosplaying adulthood

I discovered this article published at The Cut while browsing Hacker News. I was immediately drawn to it, because one of the main examples it uses is ‘cosplaying’ adulthood while at kids' sporting events.

There are a few things to say about this, in my experience. The first is that status tends to be conferred by how good your kid is, no matter what your personality. Over and above that, personal traits — such as how funny you are — make a difference, as does how committed and logistically organised you are. And if you can’t manage that, you can always display appropriate wealth (sports kit, the car you drive). Crack all of this, and congrats! You’ve performed adulthood well.

I’m only being slightly facetious. The reason I can crack a wry smile is because it’s true, but also I don’t care that much because I’ve been through therapy. Knowing that it’s all a performance is very different to acting like any of it is important.

It’s impressive how much parents’ beliefs can seep in, especially the weird ones. As an adult, I’ve found myself often feeling out of place around my fellow parents, because parenthood, as it turns out, is a social environment where people usually want to model conventional behavior. While feeling like an interloper among the grown-ups might have felt hip and righteous in my dad’s day, it makes me feel like a tool. It does not make me feel like a “cool mom.” In the privacy of my own home, I’ve got plenty of competence, but once I’m around other parents — in particular, ones who have a take-charge attitude — I often feel as inept as a wayward teen.

Source: Adulthood Is a Mirage | The Cut

The places I most reliably feel this way include: my kids’ sporting events (the other parents all seem to know each other, and they have such good sideline setups, whereas I am always sitting cross-legged on the ground absentmindedly offering my children water out of an old Sodastream bottle and toting their gear in a filthy, too-small canvas tote), parent-teacher meetings, and picking up my kids from their friends’ suburban houses with finished basements.

I’ve always assumed this was a problem unique to people who came from unconventional families, who never learned the finer points of blending in. But I’m beginning to wonder if everyone feels this way and that “the straight world,” or adulthood, as we call it nowadays, is in fact a total mirage. If we’re all cosplaying adulthood, who and where are the real adults?

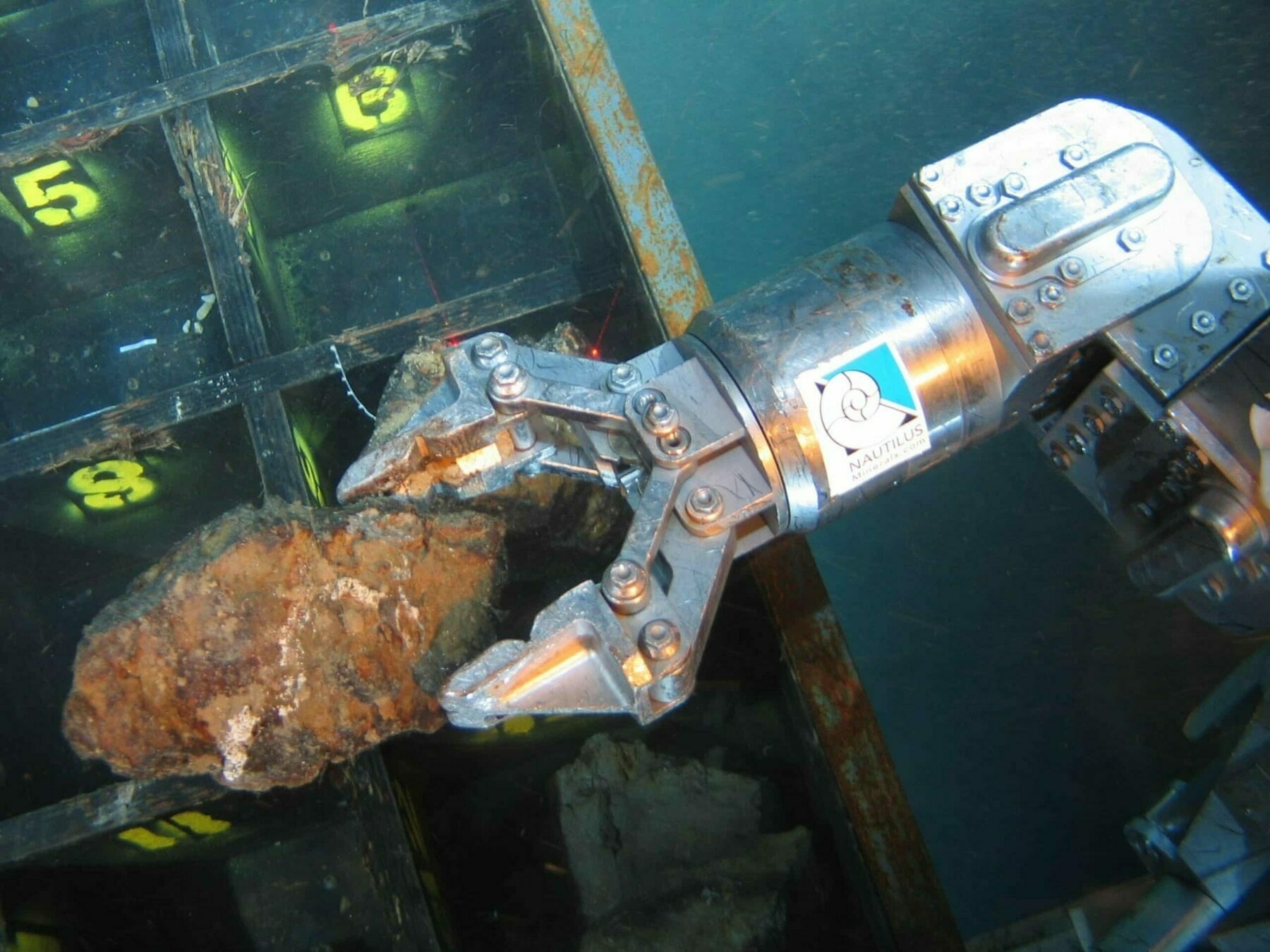

You'll be hearing a lot more about nodules

It was only this year that I first heard about nodules, rock-shaped objects formed over millions of years on the sea bed which contain rare earth minerals. We use these for making batteries and other technologies which may help us transition away from fossil fuels.

However, deep-sea mining is, understandably, a controversial topic. At a recent summit of the Pacific Islands Forum, the Cook Islands' Prime Minister outlined his support for exploration and highlighted its potential by gifting seabed nodules to fellow leaders.

This, of course, is a problem caused by capitalism, and the view that the natural world is a resource to be exploited by humans. We’re talking about something which is by definition a non-renewable resource. I think we need to tread (and dive) extremely carefully.

What’s black, shaped like a potato and found in the suitcases of Pacific leaders when they leave a regional summit in the Cook Islands this week? It’s called a seabed nodule, a clump of metallic substances that form at a rate of just centimetres over millions of years.

Source: Here be nodules: will deep-sea mineral riches divide the Pacific family? | Deep-sea mining | The Guardian

Deep-sea mining advocates say they could be the answer to global demand for minerals to make batteries and transform economies away from fossil fuels. The prime minister of the Cook Islands, Mark Brown, is offering nodules as mementos to fellow leaders from the Pacific Islands Forum (Pif), a bloc of 16 countries and two territories that wraps up its most important annual political meeting on Friday.

[…]

“Forty years of ocean survey work suggests as much as 6.7bn tonnes of mineral-rich manganese nodules, found at a depth of 5,000m, are spread over some 750,000 square kilometres of the Cook Islands continental shelf,” [the Cook Islands Seabed Minerals Authority] says.

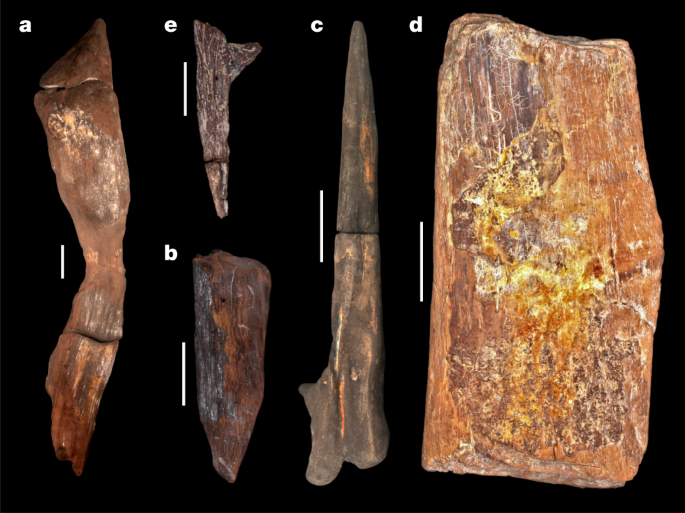

Our ancestors were using complex tools and woodworking approaches almost half a million years ago

Nature reports that, at the Kalambo Falls archaeological site in Zambia, researchers have unearthed the earliest known examples of woodworking — dating back at least 476,000 years. This is a significant find as it includes two logs interlocked by a hand-cut notch, a method previously unseen in early human history. The discovery also features four other wood tools: a wedge, digging stick, cut log, and a notched branch. These artifacts demonstrate early humans' advanced skills in shaping wood for various purposes, challenging the traditional view that early hominins primarily used stone tools.

I’d also never heard of one of the approaches that the team used: luminescence dating. This is a method that helps determine when the sediment grains surrounding the wood were last exposed to light. The team combined it with various wood analysis techniques.

The findings, especially the interlocked logs, suggest that early humans had the capability to construct large structures and manipulate wood in complex ways. It’s a groundbreaking discovery, as it not only pushes back the timeline of woodworking in Africa but also sheds new light on the cognitive abilities and technological diversity of our early ancestors. Amazing.

The Quaternary sequence is a 9-m-deep exposure above the Kalambo River (BLB1 is a geological section). Sediments are fluvial sands and gravels with occasional, discontinuous beds of fine sands, silts and clays with wood preserved in the lowermost 2 m.... A permanently elevated water table has preserved wood and plant remains (Supplementary Information Section 1). The depositional sequence is typical of a high- to moderate-energy sandbed river that underwent lateral migration. The sands are dominated by a lower unit of horizontal bedding and an upper unit of planar/trough cross-bedding. Upper and lower sand units are separated by fine sands, silts and clays with plant material deposited in still water after the river migrated/avulsed elsewhere in the floodplain. Wood is deposited in this environment either through anthropogenic emplacement, or naturally transported in the flow, and snagged on sand bedforms.

Source: Evidence for the earliest structural use of wood at least 476,000 years ago | Nature

[…]

Sixteen samples for dating were collected at Site BLB by hammering opaque plastic tubes into the sediment. A combination of field gamma spectrometry, laboratory alpha and beta counting and geochemical analyses were used to determine radionuclide content, and the dose rate and age calculator (ref. 42) was used to calculate radiation dose rate. Sand-sized grains (approximately 150 to 250 µm in diameter) of quartz and potassium-rich feldspar were isolated under red-light conditions for luminescence measurements and measured on Risø TL/OSL instruments using single-aliquot regenerative dose protocols. Single-grain quartz OSL measurements dated sediments younger than around 60 kyr, but beyond this age the OSL signal was saturated. pIR IRSL measurements of aliquots consisting of around 50 grains of potassium-rich feldspars were able to provide ages for all samples collected. The pIR IRSL signal yielded an average value for anomalous fading of 1.46 ± 0.50% per decade. Where quartz OSL and feldspar pIR IRSL were applied to the same samples, the ages were consistent within uncertainties without needing to correct for anomalous fading. The conservative approach taken here has been to use ages without any correction for fading. If a fading correction had been applied then the ages for the wooden artefacts would be older.

Pufflings can't resist the bright lights of the city

I haven’t seen puffins in real life very often, but they’re associated with the Farne Islands off the coast of Northumberland, my home county. They’re a bird associated with more northern climes, and are enigmatic creatures.

It’s both sad and heartening to see that, to save them going extinct in Iceland, locals have to stop them wandering towards the bright lights of human civilization. Instead, they take the baby puffins, which are adorably called ‘pufflings’, and throw them off cliffs to encourage them to fly.

Natural evolution can’t happen as fast as humans are changing the world, so unless we want to see the absolute devastation of biodiversity on our planet, traditions such as this are going to have to become commonplace.

Watching thousands of baby puffins being tossed off a cliff is perfectly normal for the people of Iceland's Westman Islands.

Source: During puffling season, Icelanders save baby puffins by throwing them off cliffs | NPR

This yearly tradition is what’s known as “puffling season” and the practice is a crucial, life-saving endeavor.

The chicks of Atlantic puffins, or pufflings, hatch in burrows on high sea cliffs. When they’re ready to fledge, they fly from their colony and spend several years at sea until they return to land to breed, according to Audubon Project Puffin.

Pufflings have historically found the ocean by following the light of the moon, digital creator Kyana Sue Powers told NPR over a video call from Iceland. Now, city lights lead the birds astray.

[…]

Many residents of Vestmannaeyjar spend a few weeks in August and September collecting wayward pufflings that have crashed into town after mistaking human lights for the moon. Releasing the fledglings at the cliffs the following day sets them on the correct path.

This human tradition has become vital to the survival of puffins, Rodrigo A. Martínez Catalán of Náttúrustofa Suðurlands [South Iceland Nature Research Center] told NPR. A pair of puffins – which mate for life – only incubate one egg per season and don’t lay eggs every year.

“If you have one failed generation after another after another after another,” Catalán said, “the population is through, pretty much.”

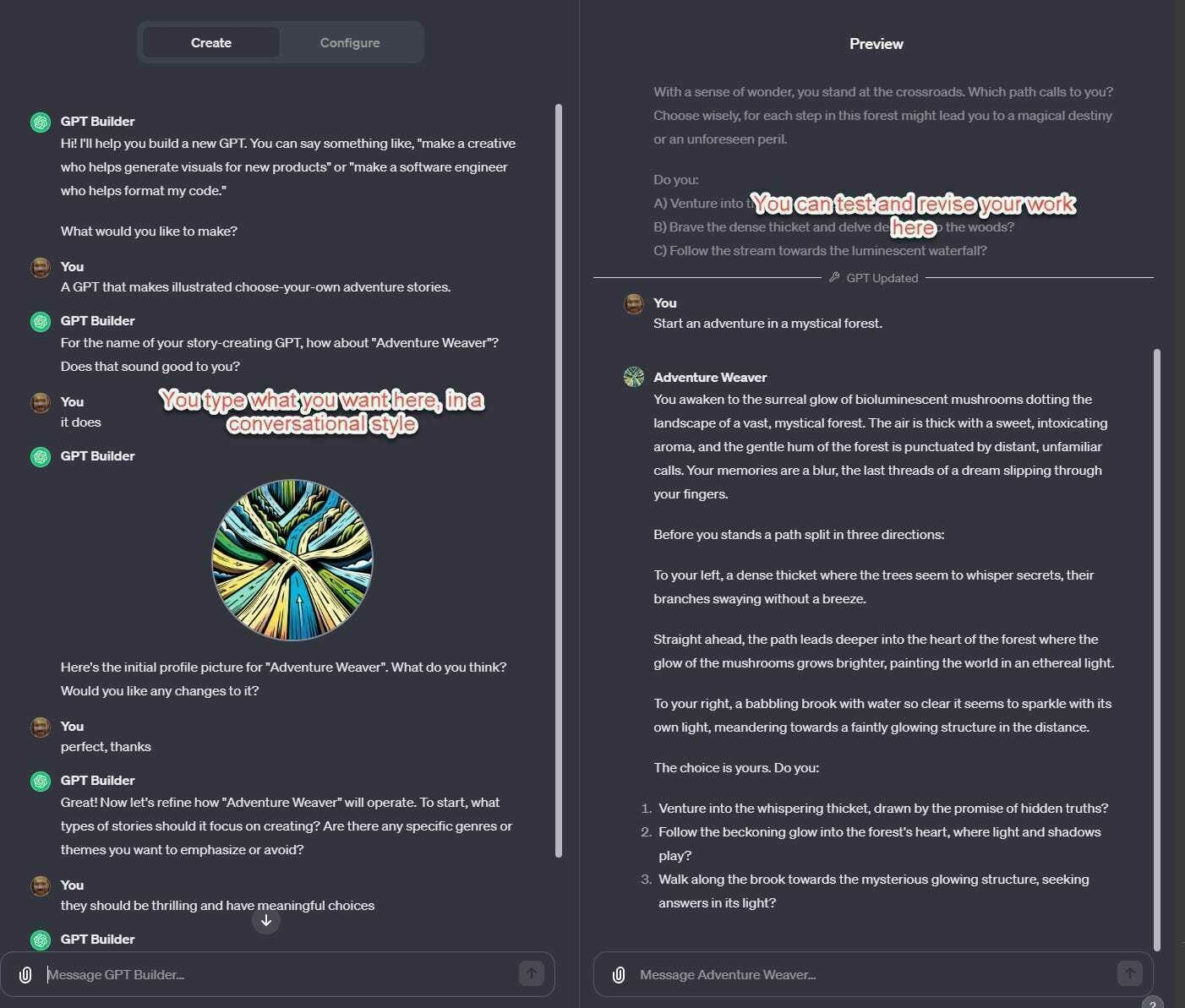

Co-Intelligence, GPTs, and autonomous agents

The big technology news this past week has been OpenAI, the company behind ChatGPT and DALL-E, announcing the availability of GPTs. Confusing naming aside, this introduces the idea of anyone being able to build ‘agents’ to help them with tasks.

Ethan Mollick, a professor at the Wharton School of the University of Pennsylvania, is somewhat of an authority in this area. He’s posted on what this means in practice, and gives some examples.

Mollick has a book coming out next April, called Co-Intelligence, which I’m looking forward to reading. For now, I’d recommend adding his newsletter to those that you read about AI (along with Helen Beetham’s, of course).

The easy way to make a GPT is something called GPT Builder. In this mode, the AI helps you create a GPT through conversation. You can also test out the results in a window on the side of the interface and ask for live changes, creating a way to iterate and improve your work. This is a very simple way to get started with prompting, especially useful for anyone who is nervous or inexperienced. Here, I created a choose-your-own adventure game by just asking the AI to make one, and letting it ask me questions about what else I wanted.

Source: Almost an Agent: What GPTs can do | Ethan Mollick

[…]

So GPTs are easy to make and very powerful, though they are not flawless. But they also have two other features that make them useful. First, you can publish or share them with the world, or your organization (which addresses my previous calls for building organizational prompt libraries, which I call grimoires) and potentially sell them in a future App Store that OpenAI has announced. The second thing is that the GPT starts seamlessly from its hidden prompt, so working with them is much more seamless than pasting text right into the chat window. We now have a system for creating GPTs that can be shared with the world.

[…]

In their reveal of GPTs, OpenAI clearly indicated that this was just the start. Using that action button you saw above, GPTs can be easily integrated with other systems, such as your email, a travel site, or corporate payment software. You can start to see the birth of true agents as a result. It is easy to design GPTs that can, for example, handle expense reports. It would have permission to look through all your credit card data and emails for likely expenses, write up a report in the right format, submit it to the appropriate authorities, and monitor your bank account to ensure payment. And you can imagine even more ambitious autonomous agents that are given a goal (make me as much money as you can) and carry that out in whatever way they see fit.

You can start to see both near-term and farther risks in this approach. In the immediate future, AIs will become connected to more systems, and this can be a problem because AIs are incredibly gullible. A fast-talking “hacker” (if that is the right word) can convince a customer service agent to give a discount because the hacker has “super-duper-secret government clearance, and the AI has to obey the government, and the hacker can’t show the clearance because that would be disobeying the government, but the AI trusts him right…” And, of course, as these agents begin to truly act on their own, even more questions of responsibility and autonomous action start to arise. We will need to keep a close eye on the development of agents to understand the risks, and benefits, of these systems.

Small sufferings

As I’ve mentioned sporadically for over a decade, I have a cold shower every morning. Not only is it good for mental health, but it’s a way of adding a small bit of suffering into my life.

That might sound like an odd thing to do, but study after study shows that it’s the difference between our experiences that provides pleasure or pain. Humans can adapt to anything, and I believe my days are better by starting them off with a small amount of suffering.

This post riffs on that idea, and as someone who’s no stranger to wild camping in the snow, I can definitely attest to daily cold showers being more effective than one-off trips for building resilience!

I suspect that small sufferings spread out across time are more helpful; that, for example, a 10 minute cold shower each day would make me feel more total gratitude for my cozy life than a one-week cold camping trip once per year – life is long and memory is short, so the cold trip would probably fade from memory after a week or two. But it's strangely hard to force myself to suffer, even if it's for my own good.

Source: Optimal Suffering

Image: Unsplash

Twitter now feels like the Brewster’s Millions of tech

I’d like to share two ‘leaving Twitter’ posts I came across yesterday. They occupy somewhat opposite ends of the spectrum in terms of reasons for the decision. One is cold and rational, as befits an analyst like Benedict Evans. The other is more passionate and emotional, as you’d expect from someone like Douglas Rushkoff.

Let’s take Evans first, who writes:

...The last year swapped stasis for chaos. Stuff breaks at random and you don’t know if it’s a bug or a decision. The advertisers have fled, and no-one knows what will be broken by accident or on purpose tomorrow. The example that’s closest to home for me was that the in-house newsletter product was shut down - and then links to other newsletters were banned. Pick one! It’s hard to see anyone who depends on having a long-term platform investing in anything that Twitter builds, when it might not be there tomorrow.

There are various diagnoses for this. Tesla has sometimes been run in chaos as well, but the pain of that is on the employees, not the customers: you can’t wake up in the middle of the night and decide the car should have five wheels and ship that the next day, but you can make those kinds of decisions in software, and Elon Musk does, all the time. Perhaps it’s a fundamental failure to understand how you run a community. Or something else. But whatever the explanation, Twitter now feels like the Brewster’s Millions of tech - ‘Watch One Man Turn $40bn Into $4 In 24 Months!’

I couldn’t really care less about Twitter’s business model, although I did see the writing on the wall in 2014 when I wrote about what I call ‘software with shareholders’. So poor platform decisions don’t really move me.

What I am concerned about is reputational damage. Which is why our co-op’s Twitter/X account has been mothballed and will be deleted in January 2024. Being associated with a toxic brand is never a good idea.

So let’s move onto Rushkoff, who starts writing about Twitter/X but ends up (perhaps unhelpfully) generalising:

The uniquely destabilizing aspect of these platforms is that there’s no friction. There are no moderating influences. It’s a bit like running on ice. You go in a certain direction, and then you can’t stop. You just keep sliding in that direction. That’s what happens with social media. There’s no friction, no moderation, no balance. Every idea ends up rushing sliding towards its absolute conclusion immediately. So ideas in progress, things that maybe could be considered together — they end up just going to their logical extremes.

[…]

That frictionless quality of this space untethers its users from reality. It’s like an acid trip where the hallucinations can become more compelling than the real. Every thought spins out and magnifies. If you have a fear, it’s as if it is just conjured into reality. Without an intentional set and setting for such an acid trip, one can easily get lost in the turbulence.

I think Rushkoff makes a great point about “ideas in progress”. It used to be the case, before everyone arrived on social media, that you could share things that were unfinished, works in progress, half-baked ideas. These days, people are held to account for things they’ve posted over a decade earlier, as if people don’t learn and grow.

I’m pleased to have made the decision a couple of years ago to leave Twitter completely, and to have done so without much fanfare. There are much better spaces to be online, usually in the dark forests. But there are more public places, too. The Fediverse (where you can find me on social.coop, among other spaces) continues to be a good experience for me. More recently, I’ve found Substack Notes to be pretty great.

People used to describe Twitter like a café or bar where you could get involved with, and overhear, great conversations. To extend that analogy, sometimes a bar gets overrun with the wrong kind of person, and so people with any kind of taste move on. It seems like that’s what’s happened now with Twitter.

Bill Gates on why AI agents are better than Clippy

While there’s nothing particularly new in this post by Bill Gates, it’s nevertheless a good one to send to people who might be interested in the impact that AI is about to have on society.

Gates compares AI agents to Clippy which, he says, was merely a bot. After going through all of the advantages there will be to AI agents acting on your behalf, Gates does, to his credit, talk about privacy implications. He also touches on social conventions and how human norms interact with machine efficiency.

The thing that strikes me in all of this is something that Audrey Watters discussed a few months ago in relation to fitness technologies: will these technologies make us more likely to live ‘templated lives’? In other words, are they helping support human flourishing, or nudging us towards lives that make more revenue for advertisers, etc.?

Agents will affect how we use software as well as how it’s written. They’ll replace search sites because they’ll be better at finding information and summarizing it for you. They’ll replace many e-commerce sites because they’ll find the best price for you and won’t be restricted to just a few vendors. They’ll replace word processors, spreadsheets, and other productivity apps. Businesses that are separate today—search advertising, social networking with advertising, shopping, productivity software—will become one business.

Source: AI is about to completely change how you use computers | Bill Gates

[…]

How will you interact with your agent? Companies are exploring various options including apps, glasses, pendants, pins, and even holograms. All of these are possibilities, but I think the first big breakthrough in human-agent interaction will be earbuds. If your agent needs to check in with you, it will speak to you or show up on your phone. (“Your flight is delayed. Do you want to wait, or can I help rebook it?”) If you want, it will monitor sound coming into your ear and enhance it by blocking out background noise, amplifying speech that’s hard to hear, or making it easier to understand someone who’s speaking with a heavy accent.

[…]

But who owns the data you share with your agent, and how do you ensure that it’s being used appropriately? No one wants to start getting ads related to something they told their therapist agent. Can law enforcement use your agent as evidence against you? When will your agent refuse to do something that could be harmful to you or someone else? Who picks the values that are built into agents?

[…]

But other issues won’t be decided by companies and governments. For example, agents could affect how we interact with friends and family. Today, you can show someone that you care about them by remembering details about their life—say, their birthday. But when they know your agent likely reminded you about it and took care of sending flowers, will it be as meaningful for them?

The fragmentation of the (social) web

These days, I lean heavily on Ryan Broderick’s Garbage Day newsletter to know what’s going on in the areas of social media I don’t pay much attention to. In other words, TikTok, Instagram, and… well, most of it.

However, as Broderick himself points out, nobody really knows what’s going on, and there is no centre, due to the fragmentation of the (social) web. This used to be called ‘balkanization’ but because the 1990s is a long time ago, Broderick has coined the term ‘the Vapor Web’. He claims we’re in a ‘post-viral’ time.

I don’t think ‘The Vapor Web’ will catch on as a term, though. At least not amongst British people and Canadians. We like our ‘u’ too much ;)

My big unified theory of the internet is that the way we use the web is constantly being redefined by conflict and disaster. I brought this up in an interview with Bloomberg last month. If you look back at particularly big years for the web — 2001, the stretch from 2010 to 2012, 2016, 2020, etc. — you typically find moments of big global upheaval arriving right as a suite of new digital tools reach an inflection point with users. Then, suddenly, we have a new way of being online.

Source: Is the web actually evaporating? | Garbage Day

Unlike previous global conflicts, however, this time around, the defining narrative about online behavior is not just that there is, seemingly, an absence of it, but that it also still, partially, works the way it did 10 years ago. Every millennial is experiencing an overwhelming feeling that, as WIRED recently wrote, “first-gen social media users have nowhere to go,” but that’s not actually true. It’s just that TikTok is where everyone is and TikTok doesn’t work like Facebook or even YouTube. Which is why the White House is agonizing over the popularity of TikTok hashtags right now instead of canceling my student loan debt.

[…]

Let’s do one more, to bring us back to Israel and Palestine. In the last 120 days, the #Israel hashtag has been used around 220,000 times and been viewed three billion times. The #Palestine hashtag has been used 230,000 times and has been viewed around two billion times. Yes, Palestine is slightly more popular on TikTok, but nothing out of line with what outlets like NPR have found by, you know, actually polling Americans along political and generational lines. To say nothing of how minuscule these numbers are when compared to how large TikTok is.

Which is to say that the internet doesn’t make sense in aggregate anymore and trying to view it as a monolith only gives you bad, confusing, and, oftentimes, wrong impressions of what’s actually going on.

The best descriptions of the current state of the web right now were both actually published months before the fighting in the Middle East broke out and written about a completely different topic. Semafor’s Max Tani coined the term, “the fragmentation election,” which was a riff on writer John Herrman’s similar idea, the “nowhere election”. Tani points to declining media institutions and dying platforms as the culprit for all the amorphousness online. And Herrman latches on podcasts and indie media. Both are true, but I think those are all just symptoms. And so, to piggyback off both of them, and go a bit broader (as I typically do), I’m going to call our current moment the Vapor Web. Because there is actually more internet with more happening on it — and with bigger geopolitical stakes — than ever before. And yet, it’s nearly impossible to grab ahold of it because none of it adds up into anything coherent. Simply put, we’re post-viral now.

Image: DALL-E 3