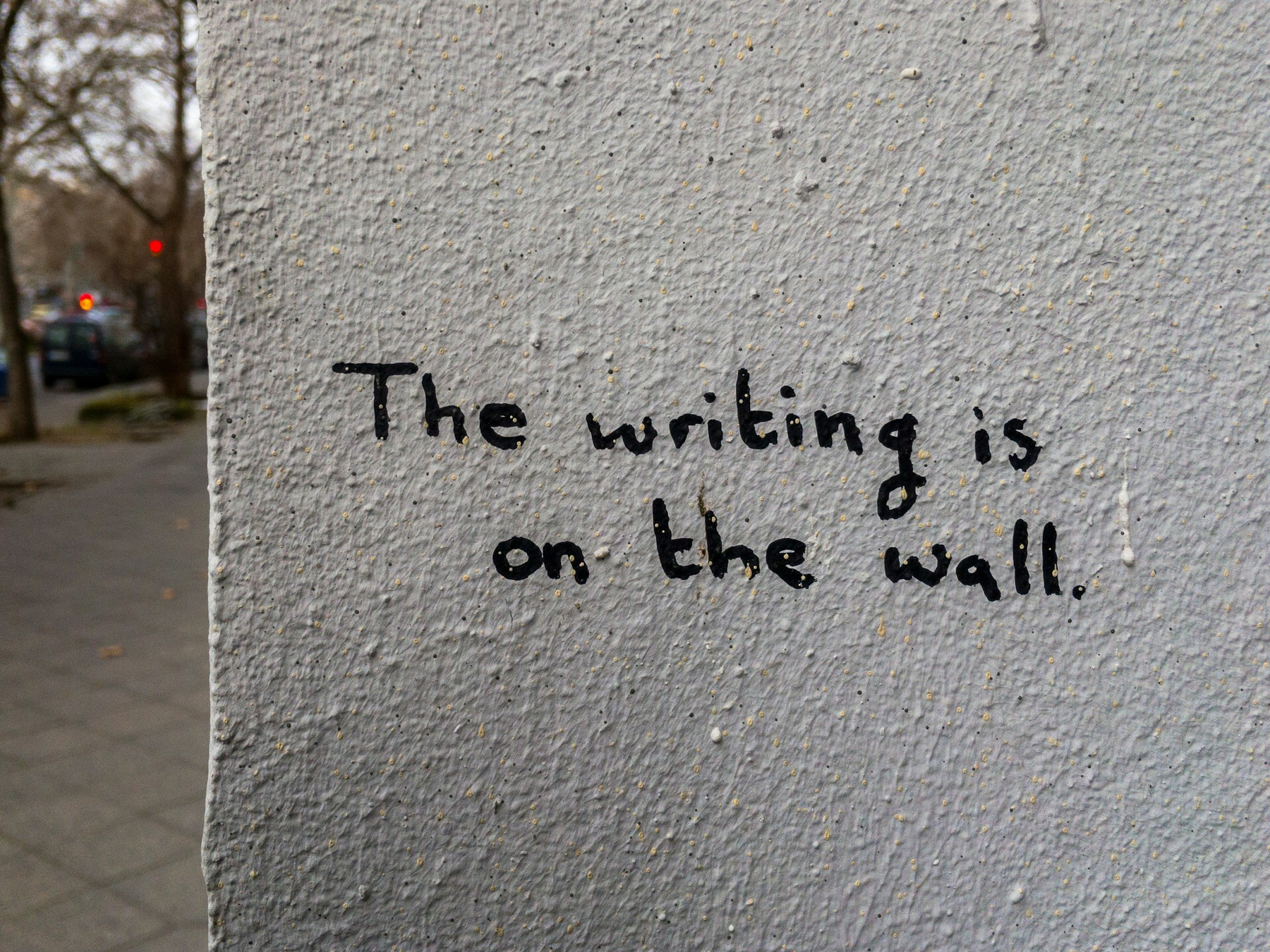

How long before run-on sentences are preferred to em-dashes?

An insightful post from Max Read about stylistic preferences with regard to human vs. AI text. Every relevant technology changes writing and, in turn, literate culture.

In many contexts most people can (more or less) correctly differentiate between A.I.-generated output and its “authentic” counterpart–but cannot correctly attribute the output.

What’s funny about this is: We actually really want to prefer human-authored writing! In open-label tests, where the excerpts are shown with attribution, people consistently express preference for whatever text is labeled human, even when the text is actually A.I.-generated. (So do A.I. evaluators, as I learned at the conference from Wouter Haverals, to an even greater degree.)

This is not a particularly satisfying set of findings insofar as it validates neither the A.I.-booster “it’s so over, A.I. writing is better than human writing” side nor the A.I.-skeptic “A.I. can never write like a human” side. What we can say is that people mostly can’t identify A.I.-generated text as A.I.-generated (crowd boos), but they can sometimes distinguish between it and human-authored text (crowd cheers); it’s just that they tend to think the A.I.-generated text is human (crowd boos), maybe because human-generated text is stranger, worse, or more difficult (crowd hesitantly cheers), which readers mistakenly believe is more typical of A.I.-generated text (crowd silent now) and thereby disprefer (crowd sort of murmuring confusedly), unless you tell them it’s actually human, in which case they change their minds and like it (crowd has mostly left at this point).

But all of it taken together suggests that, given our strong bias in favor of writing we believe to be human, A.I. vs. human “preference” tests (or “reads better” quizzes) are often second-order “identification” tests, in each case measuring not “preference” per se but the accuracy of the prevailing heuristics for identifying A.I. writing. Participants in these studies, it would seem, express preference for the A.I.-generated writing not because it’s “better” in some formal sense–cleaner, simpler, more beautiful, whatever–but because their “flawed heuristics” have led them to the conclusion that it’s human-authored, and ipso facto better.

[…]

As long as people want to prefer human-authored to L.L.M.-generated writing, we will place a premium on whatever style we associate with human authorship–even as that style changes. You can already see this process beginning from the other direction on social networks like Twitter, where em-dashes and not-x-but-y contrastive corrections–perfectly innocuous and useful writerly tools which not five years ago would likely have been highly correlated with “good prose”–are immediately treated with derision and suspicion. By that same token, certain kinds of “bad writing” should be seen as evidence of human authorship. How long before run-on sentences are preferred to em-dashes?

L.L.M.s, of course, can and will get better at mimicking the “strangeness,” clunkiness, and badness of human prose; I’m skeptical of claims that there is some built-in technical limitation that prevents A.I. text from ever being truly indistinguishable from human prose. What seems more likely to me is that as L.L.M.s move away from the easily identifiable generic LinkedIn style that currently dominates, our preferences will move as well, in an attempt to stay one step ahead.

Source: Read Max

Image: Randy Tarampi