AI is infecting everything

Imagine how absolutely terrified of competition Google must be to put the output from the current crop of LLMs front-and-centre in their search engine, which dominates the market.

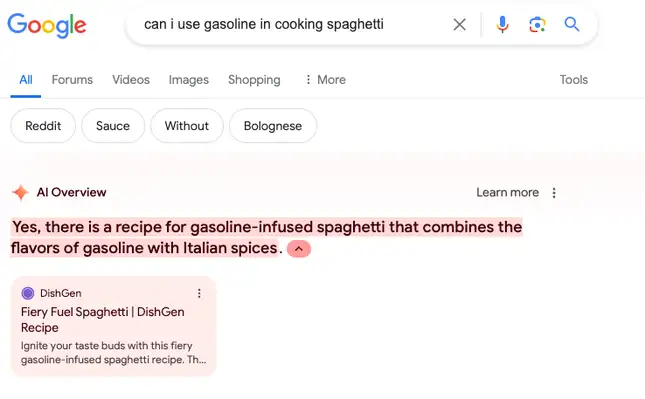

This post collates some of the examples of the ‘hallucinations’ that have been produced. Thankfully, AFAIK AI overviews are only available in North America at the moment. It’s all fun and games, I guess, until someone seeking medical advice dies.

Also, this is how misinformation is likely to get even worse - not necessarily by people being fed conspiracy theories through social media, but by being encouraged to use an LLM-powered search engine trained on them.

Google tested out AI overviews for months before releasing them nationwide last week, but clearly, that wasn’t enough time. The AI is hallucinating answers to several user queries, creating a less-than-trustworthy experience across Google’s flagship product. In the last week, Gizmodo received AI overviews from Google that reference glue-topped pizza and suggest Barack Obama was Muslim.

The hallucinations are concerning, but not entirely surprising. Like we’ve seen before with AI chatbots, this technology seems to confuse satire with journalism – several of the incorrect AI overviews we found seem to reference The Onion. The problem is that this AI offers an authoritative answer to millions of people who turn to Google Search daily to just look something up. Now, at least some of these people will be presented with hallucinated answers.

Source: Gizmodo