AI-generated images in a time of war

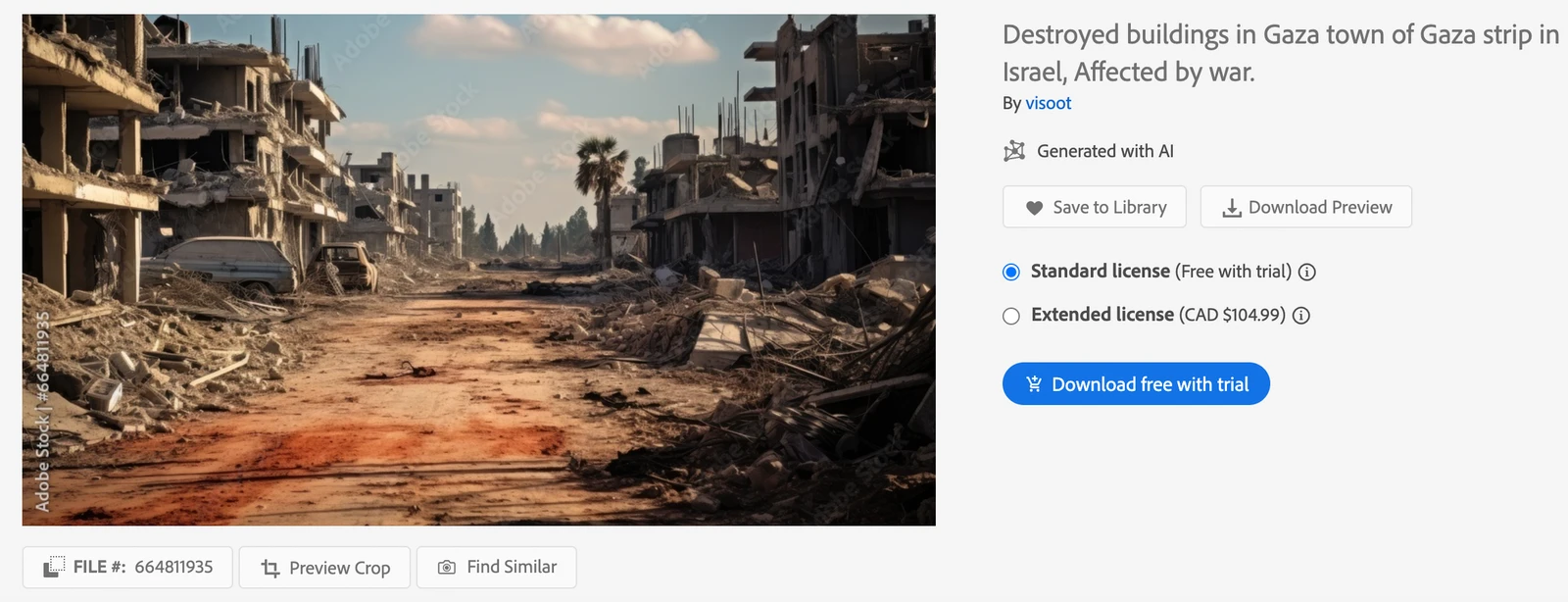

It’s one thing for user-generated content to be circulated around social media for the purposes of disinformation. It’s another thing entirely when Adobe’s stock image marketplace is selling AI-generated ‘photos’ of destroyed buildings in Gaza.

This article in VICE includes a comment from an Adobe spokesperson who references the Content Authenticity Initiative. But this just puts the onus on the user rather than the marketplace. People looking to download AI-generated images to spread disinformation don’t care about the CAI, and will actively look for ways to circumvent it.

Adobe is selling AI-generated images showing fake scenes depicting bombardment of cities in both Gaza and Israel. Some are photorealistic, others are obviously computer-made, and at least one has already begun circulating online, passed off as a real image.

Source: Adobe Is Selling AI-Generated Images of Violence in Gaza and Israel | VICE

As first reported by Australian news outlet Crikey, the photo is labeled “conflict between Israel and palestine generative ai” and shows a cloud of dust swirling from the tops of a cityscape. It’s remarkably similar to actual photographs of Israeli airstrikes in Gaza, but it isn’t real. Despite being an AI-generated image, it ended up on a few small blogs and websites without being clearly labeled as AI.

[…]

As numerous experts have pointed out, the collapse of social media and the proliferation of propaganda has made it hard to tell what’s actually going on in conflict zones. AI-generated images have only muddied the waters, including over the last several weeks, as both sides have used AI-generated imagery for propaganda purposes. Further compounding the issue is that many publicly-available AI generators are launched with few guardrails, and the companies that build them don’t seem to care.